Introduction

Information theory, as originally developed by Claude Shannon [1] to study communication systems, is referred to as classical information theory (CIT). It has found significant applications in chemistry due to its ability to quantify and analyze information in chemical processes and molecular systems. It provides tools for quantifying uncertainty and information content in chemical systems, aiding in the understanding of molecular interactions and reactions at a fundamental level [2]. By applying concepts such as entropy and mutual information, chemists gain insights into the structure and dynamics of molecules, which is crucial for predicting molecular behavior and interactions and for designing new materials and drugs [3]. Information theory techniques are also used to compress large datasets from chemical experiments and simulations, making data storage and transmission more efficient while reducing noise in experimental data for more accurate results [4]. Information theory also bridges thermodynamics and statistical mechanics by providing a formalism to describe the distribution of states in a system, leading to a deeper understanding of thermodynamic properties and phase transitions [5]. In computational chemistry, it helps optimize algorithms for molecular simulations and quantum calculations, playing a significant role in machine learning applications and enhancing the analysis and prediction of chemical phenomena [6]. Additionally, chemical informatics, which involves the storage, retrieval, and analysis of chemical information, relies heavily on information theory to improve the organization and interpretation of chemical data, facilitating advancements in research and industry [7]. Thus, information theory enriches chemistry by providing a rigorous framework for analyzing and interpreting chemical information, leading to deeper insights and more efficient methodologies in research and application.

In contrast to CIT, quantum information theory (QIT) [8] is an interdisciplinary field spanning theoretical physics and computer science that investigates how the fundamental principles of quantum mechanics can be used for information processing, transmission, and storage in ways that diverge radically from classical information theory. In essence, QIT examines how quantum mechanical concepts and properties, such as superposition, entanglement, and uncertainty, provide ways to encode, manipulate, and communicate information that are unattainable within the classical realm governed by the laws of classical physics.

Quantum information chemistry (QIChem) is an emerging field that combines quantum information science with chemistry to study and manipulate chemical systems at the quantum level [9]. This interdisciplinary approach promises to revolutionize our understanding of chemical processes and drive advancements in various scientific and industrial applications. At its core is the use of quantum computers [11], which harness quantum mechanics to solve complex chemical problems intractable for classical computers. These quantum computers offer unprecedented accuracy and efficiency in simulating molecular structures, reaction mechanisms, and energy states - capabilities crucial for predicting molecular behavior and designing new compounds.

Quantum simulations enable detailed and accurate modeling of quantum-level chemical processes, essential for drug discovery, materials science, and catalysis [12]. Quantum algorithms like Quantum Phase Estimation (QPE) and the Variational Quantum Eigensolver (VQE) [13] are designed to efficiently determine electronic structures of molecules by utilizing quantum superposition and entanglement. Moreover, quantum control techniques [14] manipulate quantum systems to achieve desired chemical outcomes, influencing reactions and bond formations for novel synthetic pathways and improved efficiencies. Applying quantum information concepts like entanglement and superposition provides new insights by elucidating the effects of quantum coherence on chemical dynamics [15].

The applications of QIChem are diverse, spanning drug discovery via simulating molecular interactions [16], materials design by predicting molecular structures and behaviors [17], improved catalysis through deeper understanding of catalyst-reactant interactions [18], energy storage technologies benefiting from insights into quantum material properties involved in energy transfer [19], and environmental solutions via simulations for pollution control and resource management [20].

However, challenges persist, including current quantum hardware limitations like high error rates, limited qubit counts, and the need for advanced algorithms to model large, complex molecules [21]. Overcoming these obstacles necessitates collaboration between quantum physicists, chemists, and computer scientists. This rapidly evolving field aims to improve quantum algorithms, develop more stable and scalable hardware, and discover new applications in chemistry and materials science [22]. QIChem has the potential to fundamentally transform our understanding and manipulation of chemical processes by leveraging quantum computing and mechanics for complex chemical problems, promising significant advancements in drug discovery, materials science, catalysis, energy storage, and environmental chemistry [23]. Despite ongoing challenges, research and technological developments could revolutionize the approach to chemical research and industrial applications.

Overview

This review explores the local aspects of chemical systems described by classical information theory (CIT), such as spreading, ordering, disequilibrium, and complexity, as well as the non-local phenomena associated with entanglement, described through quantum information-theoretic (QIT) concepts tied to the superposition of states. These phenomena are analyzed for a variety of chemical systems, from atoms, ions, and molecules to amino acids, unveiling fundamental aspects of these systems and the processes they undergo, with the aim of developing the emerging field of Quantum Information Chemistry (QIChem).

Some advances and novel interpretations are summarized here:

Shannon entropy analysis has predicted reaction mechanisms for elementary reactions of SN2 and hydrogenic abstraction type. We identified chemically important regions such as reactant/product (R/P) complexes, the transition state (TS), and others revealed only through IT measures, like the bond-cleavage energy region (BCER), bond-breaking/forming (BB/F) region, and spin-coupling (SC) process.

Quantifying complexity in physical, chemical, and biological systems is achievable through information-theoretic measures such as Shannon entropy (S), Fisher information (I), and disequilibrium (D), which together help describe the complexity and information behavior of systems.

Our research has also explored elementary chemical reactions using information theory in 3D space, focusing on functionals such as disequilibrium, Shannon entropy, and Fisher information, and on complexity measures such as Fisher-Shannon (FS) and López-Mancini-Calbet (LMC). The study uses the hydrogenic identity abstraction reaction to examine reactivity patterns through these functionals in both position (r) and momentum (p) spaces. The analysis reveals significant reactivity patterns around the intrinsic reaction coordinate (IRC) path, offering new insights into the reaction mechanism.

Information Theory analysis in 3D space of the dissociation process of the triatomic transition-state complex formed at the transition state of the hydrogenic identity abstraction reaction highlights interesting features of bond-breaking (B-B). The study shows that chemical reactions occur in low-complexity regions where various phenomena like B-B/F, BCER, SC, and TS converge.

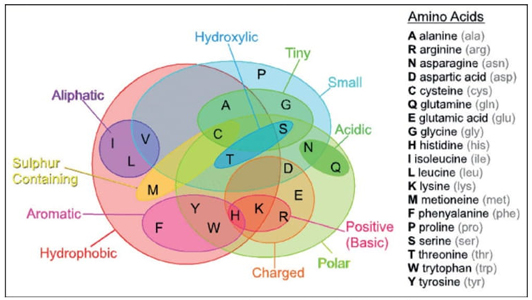

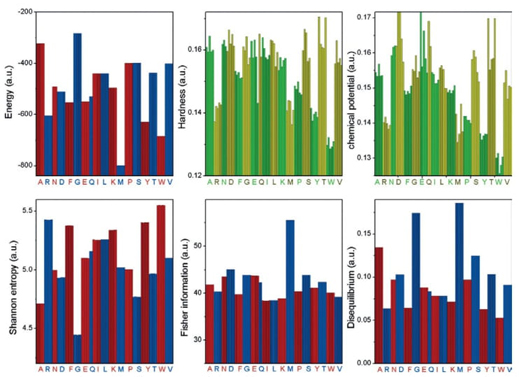

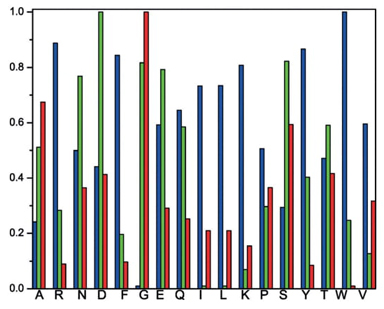

A pioneering information-theoretical analysis of 18 amino acids from bacteriorhodopsin (1C3W) was conducted. The Shannon entropy, Fisher information, and disequilibrium were used to characterize amino acids by their delocalizability, order, and uniformity, forming a scheme that classifies them into four major families: aliphatic, aromatic, electro-attractive, and tiny.

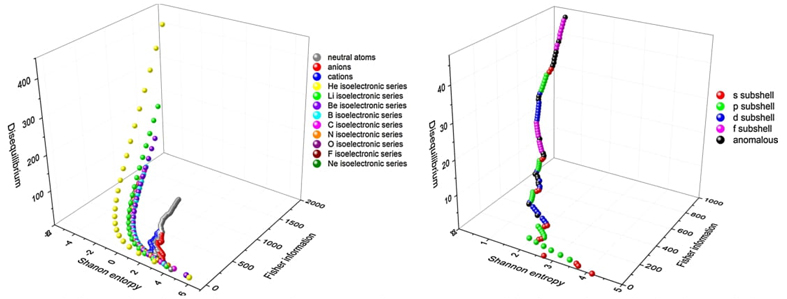

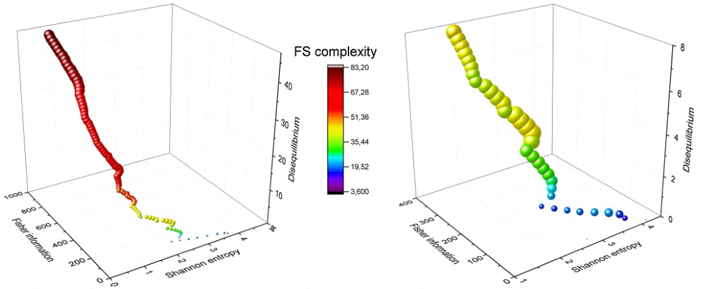

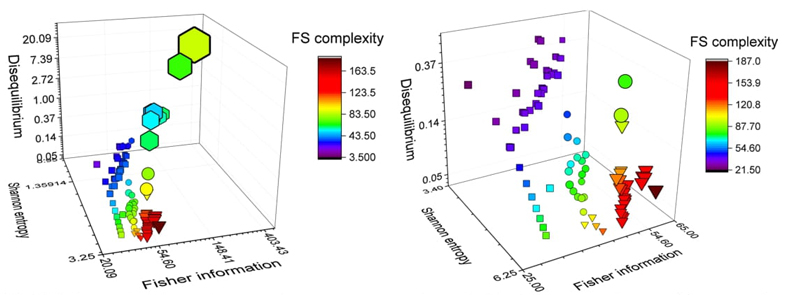

We propose a 3D Information-Theoretic Chemical Space using Shannon entropy, Fisher information, and disequilibrium measures to unveil unique aspects of many-electron systems, from simple molecules to complex systems like amino acids and pharmacological molecules. This space is based on the fundamental information-theoretic notions of delocalization, order, uniformity, and complexity.

We explore the partitioning of molecules into constituent parts using Atoms-In-Molecules (AIM) schemes and their information-theoretic justifications. We validated popular AIM schemes like Hirshfeld, Bader's topological dissection, and the quantum approach within this framework.

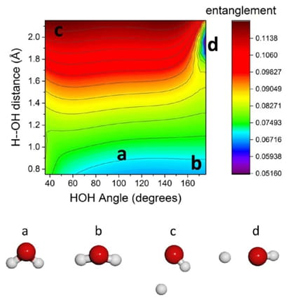

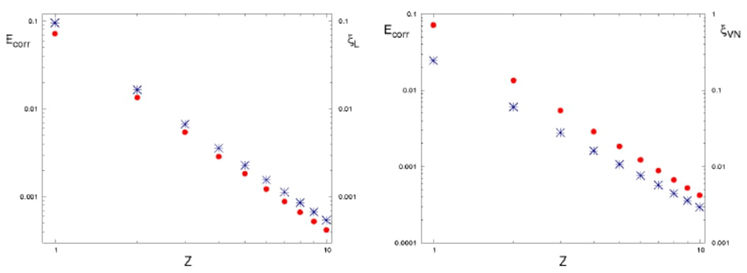

We discuss the quantum origin of correlation energy, relating it to entanglement as measured through von Neumann and linear entropies. For helium-like systems, a direct correlation between entanglement and correlation energy was observed. We provided numerical evidence of a linear relationship between correlation energy and quantum entanglement for the helium isoelectronic series.
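As a minimal illustration of the two entanglement measures named above, the following sketch evaluates the von Neumann entropy, S_vN = -Tr(ρ ln ρ), and the linear entropy, S_L = 1 - Tr(ρ²), of a reduced density matrix. It assumes a generic bipartite pure state of two distinguishable two-level subsystems, which is simpler than the many-electron (fermionic) case treated in the review; the function names and the example states are hypothetical and chosen only for illustration.

```python
import numpy as np

def reduced_density_matrix(state, dim_a, dim_b):
    """Reduced density matrix of subsystem A for a bipartite pure state."""
    psi = np.asarray(state, dtype=complex).reshape(dim_a, dim_b)
    psi = psi / np.linalg.norm(psi)      # enforce normalization
    return psi @ psi.conj().T            # partial trace over subsystem B

def von_neumann_entropy(rho):
    """S_vN = -Tr(rho ln rho), evaluated from the eigenvalues of rho."""
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > 1e-12]         # 0 ln 0 is taken as 0
    return float(-np.sum(evals * np.log(evals)))

def linear_entropy(rho):
    """S_L = 1 - Tr(rho^2)."""
    return float(1.0 - np.real(np.trace(rho @ rho)))

# Illustrative states (assumptions): a maximally entangled Bell state
# and an unentangled product state of two two-level systems.
bell = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2.0)
product = np.array([1.0, 0.0, 0.0, 0.0])

rho_bell = reduced_density_matrix(bell, 2, 2)
rho_prod = reduced_density_matrix(product, 2, 2)

print("Bell state:    S_vN =", von_neumann_entropy(rho_bell),
      " S_L =", linear_entropy(rho_bell))
print("Product state: S_vN =", von_neumann_entropy(rho_prod),
      " S_L =", linear_entropy(rho_prod))
```

Both measures vanish for the product state and take their maximal two-level values (ln 2 and 1/2) for the Bell state, which is why either can serve as a quantitative indicator of entanglement for pure states.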

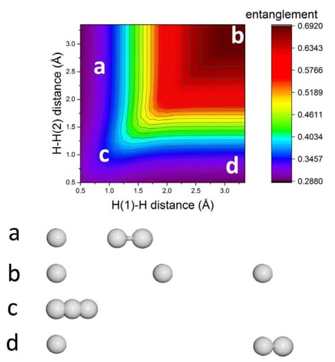

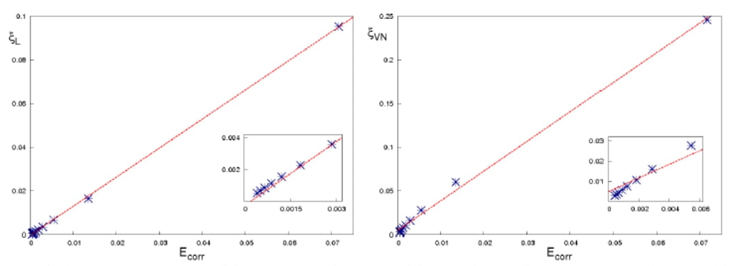

The study also delves into quantum entanglement aspects of the dissociation processes in homo- and heteronuclear diatomic molecules, using high-quality ab initio calculations. The behavior of electronic entanglement along the reaction coordinate aligns with significant physical changes in molecular density.

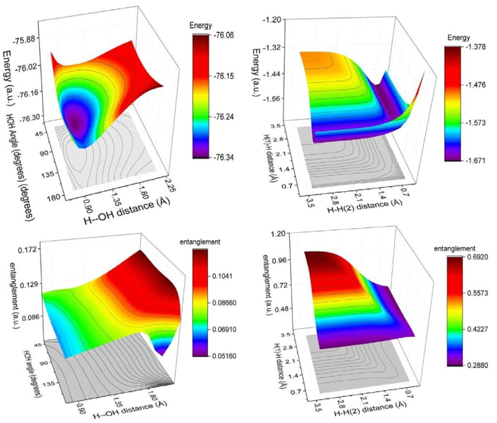

Finally, we investigate quantum entanglement, a profound manifestation of non-classical correlations between subsystems, suggesting that chemical systems inherently possess some level of entanglement, underscoring the importance of exploring its potential connection to chemical stability and reactivity.

The present review discusses the phenomenological description of several systems of higher complexity, from atoms and molecules of biological interest to dendrimers of nanotechnological nature. Furthermore, chemical transformations associated with reactivity theories are studied from the theoretical perspective of the “local” and “non-local” aspects of the pure state electron densities associated with those processes and the mixed states linked to the wavefunction superposition of the density matrix, respectively. This approach permits the use of two different information-theoretic schemes: (i) the classical description (locality) of chemical phenomena through CIT, and (ii) the quantum effects associated with entanglement (non-locality) through QIT.

Henceforth, the analyses are separated into local and non-local phenomena, covering several systems of higher complexity and processes associated with different types of chemical transformations:

Local Phenomena

Chemical Processes

Phenomenological Description of Two Center Reactions

Radical Abstraction Reaction (SN1)

Nucleophilic Substitution Reaction (SN2)

3D Complexity analysis of the Radical Abstraction Reaction

Molecules

Complexity and Information Planes of Selected Molecules

Predominant Information-Theoretic Quality scheme (PIQS) of amino acids

3D Information-Theoretic Chemical Space from Atoms to Molecules

The Separability Problem: Atoms in Molecules in Fuzzy and Disjoint Domains

Non-Local Phenomena

Quantum Entanglement

Correlation energy as a measure of non-locality: helium-like systems

Dissociation Process of Diatomic Molecules

Chemical Reactivity

Concluding Remarks

Chemical Processes

Information-theoretic measures of the Shannon type have been employed to describe the simplest hydrogen abstraction SN1 and the identity SN2 exchange chemical reactions [24]. These measures effectively detect the transition state and reveal bond breaking/forming regions. A numerical verification supports the argument that information entropy profiles possess more chemically meaningful structure than total energy profiles for these reactions. The results align with Zewail and Polanyi's concept of a continuum of transient states for the transition state, supported by reaction force analyses. Additionally, the information-theoretic description using Shannon entropic measures in position and momentum spaces allows a phenomenological description of the chemical behavior of these reactions, revealing synchronous and asynchronous mechanistic behavior [25].

Information-theoretic measures have also been applied to describe the course of a three-center insertion reaction [26], identifying transition states and stationary points that reveal bond breaking/forming regions not evident in the energy profile. These results further support the continuum of transient states concept. Fisher information measures can scrutinize the local behavior of chemically significant distributions in conjugated spaces, detecting transition states, stationary points, and bond breaking/forming regions for elementary reactions like the simplest hydrogen abstraction and identity SN2 exchange reactions [27].

The origin of the internal rotation barrier between eclipsed and staggered conformers of ethane has been systematically investigated from an information-theoretical perspective using the Fisher information measure in conjugated spaces [28]. Adiabatic (optimal structure) and vertical (fixed geometry) computational approaches were considered, following the conformeric path by changing the dihedral angle. The results showed that in the adiabatic case, the eclipsed conformer has larger steric repulsion than the staggered conformer, while in the vertical cases, the staggered conformer retains larger steric repulsion. These findings verify the feasibility of defining and computing the steric effect at the post-Hartree-Fock level of theory according to Liu's scheme.

Statistical complexity is of growing interest in physical sciences. A recent investigation examined the complexity of the hydrogenic abstraction reaction [29] using information functionals such as disequilibrium (D), exponential entropy (L), Fisher information (I), power entropy (J), and joint information-theoretic measures, including the I-D, D-L, and I-J planes and the Fisher-Shannon (FS) and LMC shape complexities. The analysis identified all chemically significant regions from the information functionals and most information-theoretical planes, including reactant/product regions (R/P), transition state (TS), bond cleavage energy region (BCER), and bond breaking/forming regions (B-B/F), which are not present in the energy profile. The complexity analysis showed that the energy profile of the abstraction reaction bears the same information-theoretical features as the LMC and FS measures in position and joint spaces. The study explained why most chemical features of interest, such as BCER and B-B/F, are absent in the energy profile and are only revealed when specific information-theoretical aspects of localizability (L or J), uniformity (D), and disorder (I) are considered.
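To make the functionals named above concrete, the following sketch evaluates them for the ground-state hydrogen atom density in position space, ρ(r) = e^(-2r)/π (atomic units, normalized to unity). The definitions used are the forms commonly found in the literature and are an assumption here, since this section does not spell them out: D = ∫ρ² dr, L = exp(S), J = (2πe)⁻¹ exp(2S/3), I = ∫|∇ρ|²/ρ dr, C_LMC = D·L, and C_FS = I·J. The hydrogen atom is chosen only because closed-form values exist for checking; it is not one of the systems analyzed in [29].

```python
import numpy as np

def trapezoid(y, x):
    """Composite trapezoidal rule on a one-dimensional grid."""
    return float(0.5 * np.sum((y[1:] + y[:-1]) * np.diff(x)))

# Radial grid (a.u.) and spherically symmetric hydrogen 1s density
r = np.linspace(0.0, 30.0, 30001)
rho = np.exp(-2.0 * r) / np.pi
w = 4.0 * np.pi * r**2                     # volume element for radial integrals

S = -trapezoid(w * rho * np.log(rho), r)   # Shannon entropy
D = trapezoid(w * rho**2, r)               # disequilibrium
drho = np.gradient(rho, r)                 # radial derivative of the density
I = trapezoid(w * drho**2 / rho, r)        # Fisher information

L = np.exp(S)                                      # exponential entropy
J = np.exp(2.0 * S / 3.0) / (2.0 * np.pi * np.e)   # power entropy

C_LMC = D * L                              # LMC shape complexity
C_FS = I * J                               # Fisher-Shannon complexity

print(f"S = {S:.4f}, D = {D:.5f}, I = {I:.4f}")
print(f"C_LMC = {C_LMC:.4f}, C_FS = {C_FS:.4f}")
```

For this density the integrals can be done by hand (S = 3 + ln π, D = 1/(8π), I = 4), which gives a quick consistency check on the numerical quadrature before applying the same functionals to molecular densities along a reaction path.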

Some other topics of interest have been incorporated in this part of the review: (i) concurrent phenomena occurring in the vicinity of the transition state, and (ii) the hypersurfaces of the information-theoretic functionals and complexity measures in 3D space for the radical abstraction reaction.

Phenomenological description of two center reactions

Predicting molecular structure and energetics during dissociations or chemical reactions is a major focus in theoretical and computational chemistry, spanning multiple research areas and driving the development of numerous theories and models extensively discussed in the literature [30]. A key aspect involves characterizing chemical processes in terms of physical phenomena like charge redistribution, bond breaking/forming, and reaction pathways.

Potential energy surface (PES) calculations at various levels of theory have been widely employed to understand the stereochemical course of chemical reactions [31]. These studies extract information about stationary points on the energy surface. According to the Born-Oppenheimer approximation, minima on the N-dimensional PES correspond to equilibrium molecular structures, while saddle points correspond to transition states and reaction rates. Since the inception of transition-state (TS) theory [32], significant efforts have focused on developing models to characterize the TS, crucial for understanding chemical reactivity.

Computational quantum chemistry addresses these challenges by defining "critical points" on a potential energy hypersurface, identifying equilibrium complexes or transition states. This approach utilizes first and second derivatives of energy (gradient and Hessian) relative to nuclear positions. Multiple minima on a contiguous energy surface allow for the construction of reaction paths, with the TS defined as the lowest maximum along these paths and a first-order saddle point. The eigenvector of the single negative eigenvalue at this critical point is the transition vector, guiding the steepest-descent path from the saddle point to the minima (reactants or products).
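The classification of critical points described above can be sketched on a toy surface. The example below assumes a hypothetical two-dimensional model potential, V(x, y) = (x² - 1)² + y², with analytic gradient and Hessian; it is not a molecular PES. A point is treated as stationary when the gradient vanishes, as a minimum when all Hessian eigenvalues are positive, and as a first-order saddle point (TS) when exactly one is negative, in which case the corresponding eigenvector is returned as the transition vector.

```python
import numpy as np

def gradient(x, y):
    """Analytic gradient of the model surface V = (x^2 - 1)^2 + y^2."""
    return np.array([4.0 * x * (x**2 - 1.0), 2.0 * y])

def hessian(x, y):
    """Analytic Hessian of the same model surface."""
    return np.array([[12.0 * x**2 - 4.0, 0.0],
                     [0.0, 2.0]])

def classify(x, y, tol=1e-8):
    """Label a point and return the transition vector for a first-order saddle."""
    if np.linalg.norm(gradient(x, y)) > tol:
        return "not stationary", None
    evals, evecs = np.linalg.eigh(hessian(x, y))   # eigenvalues in ascending order
    n_negative = int(np.sum(evals < 0.0))
    if n_negative == 0:
        return "minimum", None
    if n_negative == 1:
        return "first-order saddle (TS)", evecs[:, 0]
    return "higher-order saddle", None

for point in [(-1.0, 0.0), (0.0, 0.0), (1.0, 0.0), (0.5, 0.0)]:
    label, vector = classify(*point)
    print(point, label, vector)
```

On this surface the two minima at (±1, 0) play the role of reactants and products, and the saddle at the origin has its transition vector along x, the direction that connects them.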

The unique reaction path can be defined using an intrinsic reaction coordinate (IRC), independent of the coordinate system, by employing classical mechanics in mass-weighted Cartesian coordinates [34]. The IRC is the path traced by a classical particle moving with infinitesimal velocity from a saddle point to the minima, aligning with the steepest-descent path in mass-weighted coordinates. Several computational techniques have been developed to calculate energy gradients and Hessians, allowing the following of such reaction paths [35].
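A steepest-descent path in mass-weighted coordinates can be sketched as follows. The example is a minimal illustration on a hypothetical two-dimensional model surface, V(x, y) = (x² - 1)² + 2(y - 0.3x²)², with made-up masses; it uses plain Euler steps rather than any of the production IRC algorithms cited above. In mass-weighted coordinates q = √m·x the gradient flow is dq/ds = -∂V/∂q, which in Cartesian coordinates becomes dx/ds = -(1/m)·∂V/∂x.

```python
import numpy as np

def energy(x):
    """Model surface V = (x^2 - 1)^2 + 2 (y - 0.3 x^2)^2 (illustrative only)."""
    u = x[1] - 0.3 * x[0]**2
    return (x[0]**2 - 1.0)**2 + 2.0 * u**2

def grad_v(x):
    """Analytic gradient of the model surface."""
    u = x[1] - 0.3 * x[0]**2
    return np.array([4.0 * x[0] * (x[0]**2 - 1.0) - 2.4 * x[0] * u,
                     4.0 * u])

def follow_path(saddle, direction, masses, step=0.01, kick=1e-3,
                gtol=1e-8, max_steps=50000):
    """Euler integration of the mass-weighted steepest-descent path."""
    x = np.asarray(saddle, dtype=float) + kick * np.asarray(direction, dtype=float)
    path = [x.copy()]
    for _ in range(max_steps):
        g = grad_v(x)
        if np.linalg.norm(g) < gtol:       # reached a minimum
            break
        x = x - step * g / masses          # dx = -(1/m) dV/dx ds
        path.append(x.copy())
    return np.array(path)

masses = np.array([1.0, 2.0])              # hypothetical masses
saddle = np.array([0.0, 0.0])              # first-order saddle of the model surface
transition_vector = np.array([1.0, 0.0])   # eigenvector of the negative Hessian eigenvalue

forward = follow_path(saddle, +transition_vector, masses)
reverse = follow_path(saddle, -transition_vector, masses)
print("forward end point:", forward[-1])
print("reverse end point:", reverse[-1])
```

The small displacement (`kick`) along the transition vector is needed because the gradient vanishes exactly at the saddle point; the two branches then descend to the minima at (1, 0.3) and (-1, 0.3), and concatenating them gives the full path from one minimum to the other.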

Despite the utility of critical points on the energy surface for analyzing reaction paths, their chemical or physical significance remains uncertain [36]. The pursuit to understand the transition state (TS) structure represents a challenge in physical organic chemistry, although chemical concepts like reaction rate and barrier have been extensively studied. Efforts to achieve this have produced chemically useful TS descriptions, such as the Hammond-Leffler postulate [37]. Hammond proposed that points on a reaction profile with similar energies will also have similar structures, allowing TS structure predictions in highly exothermic and endothermic reactions. Leffler generalized this idea, considering the TS as a hybrid of reactants and products with an intermediate character. The Hammond-Leffler postulate remains a practical tool, stating that TS properties are intermediate between reactants and products, related to its position along the reaction coordinate.

With the advent of femtosecond time-resolved methods, these theories have gained renewed relevance. Since Zewail and co-workers' seminal studies [38], femtochemistry techniques have been applied to chemical reactions of varying complexity, providing new insights into fundamental chemical processes. As Zewail stated, femtochemistry allows observing "the very act of breaking or making a chemical bond" by "freezing" the molecular dynamics. Although most femtochemistry studies deal with excited states, ground-state processes have been studied as well. One promising technique, anion photodetachment spectra [39], has enabled direct observation of transition states. To explain the experimental results of femto-techniques, it will be necessary to complement existing chemical reactivity theories with electronic density descriptors of events occurring in the transition-state region, where bonds are formed or broken.

Numerous studies have employed various descriptors to investigate transition state (TS) structure or follow the chemical reaction path. Shi and Boyd systematically analyzed model SN2 reactions to study TS charge distribution in connection with the Hammond-Leffler postulate [40]. Bader et al. developed a reactivity theory based on charge density properties by employing the Laplacian, aligning charge concentrations with depletion regions by mixing in the lowest excited state to produce a transition density [41]. Balakrishnan et al. showed information-theoretic entropies in phase space peaked during a bimolecular exchange reaction [42]. Ho et al. found information measures revealed geometrical density changes not present in the energy profile for SN2 reactions, though not identifying TSs [43]. Knoerr et al. correlated charge density features with energy-based charge transfer [44], stability, and localization measures for an SN2 reaction, attempting a density-based reactivity theory [45]. Tachibana visualized bond formation using kinetic energy density to identify reactant, TS, and product shapes along the IRC [46], realizing Coulson's conjecture [47]. Reaction forces along the reaction coordinate have characterized structural/electronic changes [48]. The Kullback-Leibler deficiency has been evaluated along internal coordinates and IRCs for SN2 reactions [49].

In spite of the interest in applying information theory (IT) measures to electronic structure [50], their effectiveness as descriptors for characterizing IRC stationary points (TS, equilibrium geometries) and bond breaking/forming regions remained unclear. Indeed, a proper analysis of IT descriptors [24] unveils the phenomenological behavior of elementary reactions by following their IRC paths and using Shannon entropies to analyze the behavior of the densities in position and momentum spaces near the TS and in the bond breaking/forming regions, which are not visible in the energy profile. Descriptors such as the molecular electrostatic potential (MEP) and the conceptual-DFT hardness and softness are used to link density changes to the information quantities during the reactions.

The chemical probes under study are the simplest hydrogen abstraction reaction, H• + H2 → H2 + H•, and the identity SN2 exchange reaction.

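The central quantities under study are the Shannon entropies in position and momentum spaces [1]:

$$S_r = -\int \rho(\mathbf{r}) \ln \rho(\mathbf{r})\, d^3\mathbf{r}$$

$$S_p = -\int \gamma(\mathbf{p}) \ln \gamma(\mathbf{p})\, d^3\mathbf{p}$$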

where ρ(r) and γ(p) denote the molecular electron density distributions in the position and momentum spaces, each normalized to unity. In the independent-particle approximation, the total density distribution in a molecule is a sum of contributions from the electrons in each of the occupied orbitals. This is the case in both r-space and p-space, position and momentum respectively. In momentum space, the total electron density,

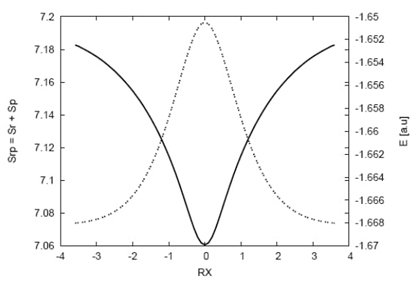

The Shannon entropy in position space, Sr, behaves like a measure of delocalization or lack of structure of the electronic density in position space; hence Sr is maximal when knowledge of ρ(r) is minimal, that is, when the density is fully delocalized. The Shannon entropy in momentum space, Sp, is largest for systems with electrons of higher speed (delocalized γ(p)) and is smaller for relaxed systems where the kinetic energy is low. Sp is closely related to Sr through the uncertainty relation of Bialynicki-Birula and Mycielski [53], which shows that the entropy sum ST = Sr + Sp is a balanced measure that cannot decrease arbitrarily: for densities normalized to unity in three dimensions, ST ≥ 3(1 + ln π). For one-electron atomic systems, this may be interpreted as follows: localization of the electron's position results in an increase of the kinetic energy and a delocalization of the momentum density, and conversely.
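The entropic uncertainty relation can be checked numerically on a system with known densities. The sketch below assumes the ground state of the hydrogen atom in atomic units, with ρ(r) = e^(-2r)/π and γ(p) = 8/[π²(1 + p²)⁴], both normalized to unity; this one-electron example is chosen for illustration only and is not part of the calculations reported in the review. Because both densities are spherically symmetric, the three-dimensional integrals reduce to radial ones, evaluated here with a simple trapezoidal rule.

```python
import numpy as np

def radial_integral(f, x):
    """Trapezoidal estimate of the integral of 4*pi*x^2 * f(x) over x."""
    y = 4.0 * np.pi * x**2 * f
    return float(0.5 * np.sum((y[1:] + y[:-1]) * np.diff(x)))

def shannon_entropy(density, x):
    """S = -integral of density * ln(density) for a spherical density."""
    return -radial_integral(density * np.log(density), x)

# Radial grids in position and momentum space (atomic units)
r = np.linspace(0.0, 40.0, 400001)
p = np.linspace(0.0, 200.0, 2000001)

rho = np.exp(-2.0 * r) / np.pi                        # hydrogen 1s, position space
gamma = 8.0 / (np.pi**2 * (1.0 + p**2)**4)            # hydrogen 1s, momentum space

S_r = shannon_entropy(rho, r)
S_p = shannon_entropy(gamma, p)
S_T = S_r + S_p
bbm_bound = 3.0 * (1.0 + np.log(np.pi))               # lower bound on S_r + S_p

print(f"norms: {radial_integral(rho, r):.6f}, {radial_integral(gamma, p):.6f}")
print(f"S_r = {S_r:.4f}, S_p = {S_p:.4f}, S_T = {S_T:.4f}, bound = {bbm_bound:.4f}")
```

The entropy sum lies slightly above the bound, which is saturated only by Gaussian densities; contracting the position density (for example, by increasing the nuclear charge) lowers the position entropy and raises the momentum entropy, leaving the sum unchanged for hydrogen-like ground states.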

A simple explanation of this behavior of the Shannon entropies is as follows. Since there is no variational principle for quantum mechanical properties other than the energy, the Shannon entropy cannot be related to the transition state directly. A plausible argument, however, is that the transition state should correspond to a highly localized momentum distribution and, per the uncertainty principle, a delocalized position distribution. In the classical mechanical picture, this highly localized momentum density represents a minimum of the kinetic energy.

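The MEP represents the molecular potential energy of a proton at a particular location near a molecule [54], say at nucleus A. The electrostatic potential, VA, is then defined as

$$V_A = \left(\frac{\partial E^{\mathrm{molecule}}}{\partial Z_A}\right)_{N,\, Z_{B \neq A}} = \sum_{B \neq A} \frac{Z_B}{|\mathbf{R}_B - \mathbf{R}_A|} - \int \frac{\rho(\mathbf{r})}{|\mathbf{r} - \mathbf{R}_A|}\, d\mathbf{r}$$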

where ρ(r) is the molecular electron density and ZA is the nuclear charge of atom A, located at RA. Generally speaking, a negative electrostatic potential corresponds to an attraction of the proton by the concentrated electron density in the molecule, arising from lone pairs, pi-bonds, and similar features (coloured in shades of red in standard contour diagrams). A positive electrostatic potential corresponds to repulsion of the proton by the atomic nuclei in regions where the electron density is low and the nuclear charge is incompletely shielded (coloured in shades of blue in standard contour diagrams).

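We have also evaluated some reactivity parameters that may be useful for analyzing the chemical reactivity of the processes. Parr and Pearson proposed a quantitative definition of hardness (η) within conceptual DFT [55]:

$$\eta = \frac{1}{2S} = \frac{1}{2}\left(\frac{\partial \mu}{\partial N}\right)_{v(\mathbf{r})}$$

where $\mu = (\partial E/\partial N)_{v(\mathbf{r})}$ is the electronic chemical potential and S is the softness. In the finite-difference approximation,

$$\eta \approx \frac{I - A}{2}$$

where I and A are the ionization potential (IP) and electron affinity (EA), respectively. Applying Koopmans’ theorem [56], Eq. (4) can be written as:

$$\eta \approx \frac{\varepsilon_{\mathrm{LUMO}} - \varepsilon_{\mathrm{HOMO}}}{2}$$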

where ε denotes the frontier molecular orbital energies. In general terms, hardness and softness are good descriptors of chemical reactivity: the former measures the global stability of the molecule (larger values of η mean less reactive molecules), whereas the softness S quantifies the polarizability of the molecule [57]; thus soft molecules are more polarizable and are predisposed to acquire additional electronic charge [58]. The chemical hardness η is a central quantity in the study of reactivity and stability through the hard and soft acids and bases principle [59]. However, in many cases the experimental electron affinity is negative rather than positive, and such systems pose a fundamental problem: the anion is unstable with respect to electron loss and cannot be described by a standard DFT ground-state total energy calculation. To circumvent this limitation, Tozer and De Proft introduced an approximate method to compute the hardness that requires only calculations on the neutral and cationic systems and does not explicitly involve the electron affinity [60]:

$$\eta = \frac{\varepsilon_{\mathrm{LUMO}} - \varepsilon_{\mathrm{HOMO}}}{2} + \varepsilon_{\mathrm{HOMO}} + I$$

where I is obtained from total electronic energy calculations on the N-1 and N electron systems at the neutral geometry.
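The hardness expressions discussed above reduce to simple arithmetic once the orbital energies and the ionization potential are available. The sketch below assumes the standard forms η ≈ (I - A)/2 (finite difference), η ≈ (ε_LUMO - ε_HOMO)/2 (Koopmans), and η = (ε_LUMO - ε_HOMO)/2 + ε_HOMO + I (Tozer and De Proft), together with the convention S = 1/(2η) for the softness. The function names and the numerical inputs are hypothetical and serve only to show how the quantities combine; they are not values from the calculations reported here.

```python
def hardness_finite_difference(ionization_potential, electron_affinity):
    """Finite-difference hardness: (I - A) / 2."""
    return 0.5 * (ionization_potential - electron_affinity)

def hardness_koopmans(e_homo, e_lumo):
    """Koopmans-type estimate from frontier orbital energies."""
    return 0.5 * (e_lumo - e_homo)

def hardness_tozer_de_proft(e_homo, e_lumo, ionization_potential):
    """Estimate that avoids the electron affinity (assumed standard form)."""
    return 0.5 * (e_lumo - e_homo) + e_homo + ionization_potential

def softness(hardness):
    """Global softness under the convention S = 1 / (2 * eta)."""
    return 1.0 / (2.0 * hardness)

# Hypothetical inputs in atomic units, for illustration only
e_homo, e_lumo = -0.40, 0.10
ionization_potential, electron_affinity = 0.50, 0.10

eta_fd = hardness_finite_difference(ionization_potential, electron_affinity)
eta_k = hardness_koopmans(e_homo, e_lumo)
eta_tdp = hardness_tozer_de_proft(e_homo, e_lumo, ionization_potential)

print("finite difference:", eta_fd, " softness:", softness(eta_fd))
print("Koopmans:         ", eta_k, " softness:", softness(eta_k))
print("Tozer-De Proft:   ", eta_tdp, " softness:", softness(eta_tdp))
```

The third expression follows from substituting the approximation A ≈ -(ε_LUMO + ε_HOMO) - I into (I - A)/2, so it needs only the neutral-system orbital energies and an ionization potential from neutral and cation total energies.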

The electronic structure calculations were conducted using the Gaussian 03 software suite [62]. Reported transition state (TS) geometries from previous studies were utilized for the abstraction [62] and SN2 exchange reactions [63]. Intrinsic reaction coordinate (IRC) calculations [64] along the forward and reverse reaction paths were performed at the MP2 level of theory (UMP2 for the abstraction reaction), with a minimum of 35 points along each IRC path direction. A high level of theory and a well-balanced basis set, incorporating diffuse and polarized orbitals, were selected for computing all properties of the structures along the IRC path. The chemical hardness and softness parameters were evaluated using Eqs. (6) and (7) with the standard hybrid B3LYP functional (UB3LYP for the abstraction reaction) [60].

Molecular vibrational frequencies, derived from the eigenvalues of the Hessian matrix (whose elements are associated with force constants) at the stationary point nuclear coordinates, were calculated. Notably, the values corresponding to the normal mode at the TS (exhibiting one imaginary frequency or negative force constant) were determined analytically at all points along the IRC path using the MP2 level of theory (UMP2 for the abstraction reaction) [64]. The molecular information entropies in position and momentum spaces along the IRC path were obtained using in-house software incorporating 3D numerical integration routines [65], along with the DGRID program suite [66]. The bond breaking/forming regions, as well as electrophilic/nucleophilic atomic regions, were evaluated through the molecular electrostatic potential (MEP) using the MOLDEN software [67]. Unless stated otherwise, atomic units were employed throughout the study.

Radical abstraction reaction

The reaction studied is the hydrogenic abstraction, H• + H2 → H2 + H•.

For this reaction, calculations were performed at two different levels of theory. The intrinsic reaction coordinate (IRC) was obtained at the UMP2/6-311G level, while all properties along the IRC path were computed at the QCISD(T)/6-311++G** level. As a result of the IRC calculation, 72 points were evenly distributed between the forward and reverse directions of the reaction. A relative tolerance of 10⁻⁵ was set for the numerical integrations [66].

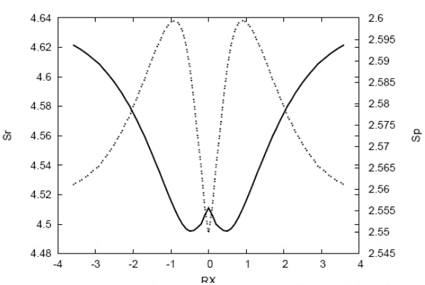

Fig. 1 Total energy values (dashed line) in a.u. and the entropy sum (solid line) for the IRC path of the hydrogenic abstraction reaction.

The energy profile and entropy sum along the intrinsic reaction coordinate (IRC) path for the abstraction reaction are shown in Fig. 1. The energy profile exhibits symmetric behavior, while the entropy sum (ST) shows the opposite trend. The saddle point structure has a localized density in the combined position and momentum space (phase space), corresponding to a more delocalized position density with the lowest kinetic energy among the nearby structures along the IRC path. This suggests that the saddle point can be characterized by information theory (IT) on the entropy hypersurface.

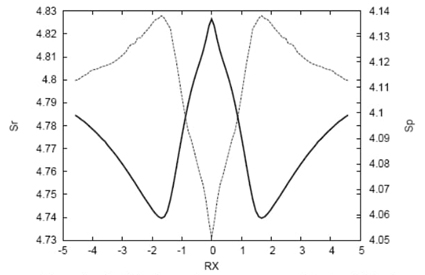

Fig. 2 Shannon entropies in position (solid line) and momentum (dashed line) spaces for the IRC path of the hydrogenic abstraction reaction.

Fig. 2 presents the Shannon entropies in position and momentum spaces along the IRC path. The position entropy has a local maximum at the transition state (TS) and two minima in its vicinity, while the momentum entropy shows the opposite behavior, with a minimum at the TS and two maxima nearby. As the intermediate radical approaches the molecule, the position entropy decreases towards the minima flanking the TS, indicating that the densities of the chemical structures are more localized in this region, where important chemical changes occur. In contrast, the momentum entropy increases towards its maxima near the TS and then drops to a minimum at the TS itself, which is linked to a more localized momentum density with the lowest kinetic energy (corresponding to the maximum on the potential energy surface). At the reactive complex regions (towards reactants and products), the momentum entropy and kinetic energy values are higher than at the TS, reproducing the typical potential energy surface shown in Fig. 1.

In summary, the entropy and energy profiles provide valuable insights into the chemical changes occurring along the IRC path. The immediate vicinity of the TS is characterized by localized densities in position space and delocalized densities in momentum space, whereas the TS itself shows a delocalized position density and a localized momentum density, reflecting the lowest kinetic energy and the highest potential energy at the saddle point.

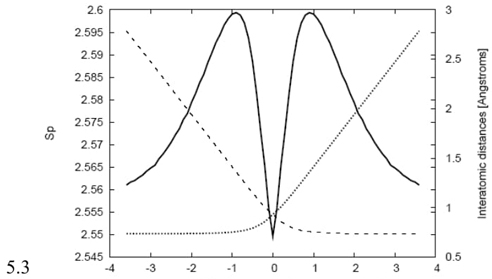

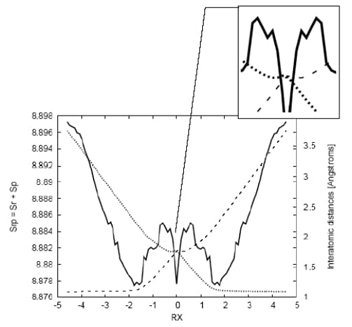

Fig. 3 Shannon entropy in momentum space (solid line) and the bond distances R(H0-Hin) (dashed line for the entering hydrogen) and R(H0-Hout) (dotted line for the leaving hydrogen) in Angstroms for the IRC path of the hydrogenic abstraction reaction.

Fig. 3 illustrates the bond distances between the entering/leaving hydrogen radicals and the central hydrogen atom along the reaction path. The plot clearly indicates that in the vicinity of the transition state (TS), a bond breaking and forming process is taking place. On the right side of the TS, the bond distance Rin is elongating, signifying the breaking of the bond. Simultaneously, on the left side of the TS, the bond distance Rout is stretching, indicating the formation of a new bond. Interestingly, the chemical process does not occur in a concerted manner. Instead, the reaction follows a two-step mechanism. First, homolytic bond cleavage takes place, and then the molecule stabilizes by forming the TS structure. This stepwise process is evident in Fig. 3. As the incoming radical approaches the molecule, the bond breaks at the same location where the position entropy reaches its minimum value, and the momentum entropy attains its maximum. This observation aligns with the previous discussion regarding the localized density in position space and delocalized density in momentum space at the TS region. After the bond cleavage and TS formation, the new molecule is formed, completing the reaction process. The analysis of bond distances and the entropy profiles supports the two-step mechanism characterizing this abstraction reaction.

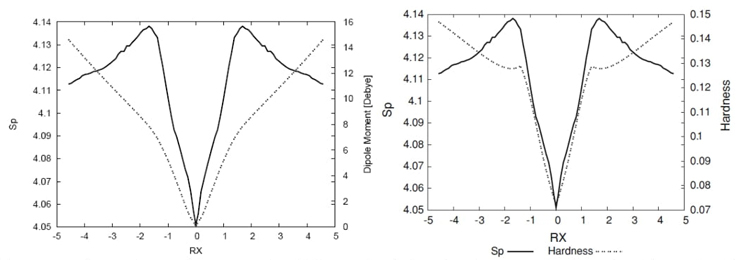

Fig. 4 Shannon entropy in momentum space (solid line), the dipole moment values in Debyes (dashed line), and the hardness values (dashed line) for the IRC path of the hydrogenic abstraction reaction.

In Fig. 4, the non-polar bond pattern characteristic of homolytic bond-breaking reactions is studied by examining the dipole moment and momentum entropy along the intrinsic reaction coordinate (IRC) path [24]. The dipole moment is zero at the transition state (TS) and when approaching reactants/products, reflecting the non-polar nature of the molecule in these regions. However, the molecular densities undergo significant distortion near the TS, where the position entropies are minimal, indicating a rigid molecular geometry with localized position density in the bond breaking/forming regions. The momentum entropy maxima reveal that the energy reservoirs for bond cleavage, referred to as bond cleavage energy reservoirs (BCER), occur earlier or later along the IRC path, depending on the reaction direction. The interplay between dipole moment, position entropy, and momentum entropy provides insight into the reaction and the critical role of the TS and BCER regions in the overall mechanism.

Fig. 4 also shows the hardness values alongside the momentum-space entropy for comparison. From a conceptual DFT point of view, chemical structures at maximal hardness (minimal softness) possess low polarizability and are hence less prone to acquire additional charge (less reactive). According to the considerations above, these structures are found in the BCER regions, i.e., they are maximally distorted, with highly localized position densities (minimal position entropies) and maximal dipole moment values (see Fig. 4). In contrast, hardness values are minimal in the reactant/product complex regions, which correspond to delocalized position densities with null dipole moments; these structures are hence more prone to react (more reactive). At the TS, a local minimum of the hardness is observed, so the TS is locally more reactive and more prone to acquire charge, its dipole moment being null. Accordingly, the TS structure is more relaxed and has a more delocalized density.

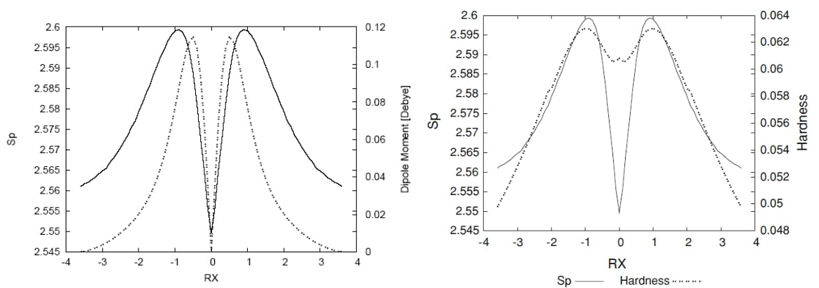

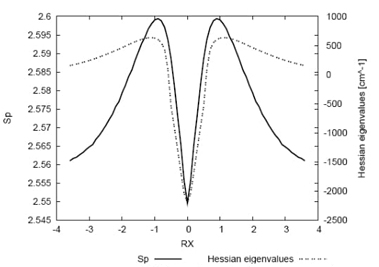

Fig. 5 Shannon entropy in momentum space (solid line) and the eigenvalues of the Hessian (dashed line) for the IRC path of the hydrogenic abstraction reaction.

In Fig. 5, the eigenvalues of the Hessian matrix for the normal mode associated with the transition state (TS) are plotted along the reaction path, together with the momentum entropy values for comparison. These Hessian values signify the transition vector "frequencies," exhibiting maxima in the vicinity of the TS and a minimum value at the TS itself. Several noteworthy aspects emerge from this analysis. Firstly, the TS indeed represents a saddle point, with the Hessian maxima corresponding to high kinetic energy values (largest "frequencies" for the energy cleavage reservoirs), aligning with the peak values in the momentum entropy profile. Conversely, the Hessian is minimal at the TS, where the kinetic energy is the lowest (minimal molecular frequency), coinciding with a minimal momentum-entropy value. Additionally, the transition state of a reaction is typically characterized by the presence of a negative force constant for one normal vibrational mode, associated with an imaginary frequency. The pioneering work of Zewail and Polanyi in transition state spectroscopy has introduced the concept of a reaction possessing a continuum of transient states, referred to as a transition region, rather than a single transition state [38,68]. Remarkably, the findings of the current study provide evidence for the existence of such a region between the bond cleavage energy reservoirs (BCER) situated before and after the TS. This is in agreement with reaction force, F(R), studies [48] where the reaction force constant, κ(R), also reflects this continuum, showing it to be bounded by the minimum and the maximum of F(R), at which κ(R) = 0.
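The notion of a transition region bounded by the extrema of the reaction force can be sketched numerically. The example below assumes a hypothetical symmetric model barrier, E(R) = 1/cosh²(R) in arbitrary units, rather than the computed IRC energies; the function names are illustrative. It evaluates F(R) = −dE/dR and κ(R) = d²E/dR² by finite differences and locates the minimum and maximum of F(R), between which κ(R) is negative.

```python
import numpy as np

def model_barrier(r, height=1.0):
    """Hypothetical symmetric barrier E(R) = height / cosh(R)**2 (arbitrary units)."""
    return height / np.cosh(r) ** 2

def reaction_force_analysis(r, energy):
    """Return F = -dE/dR, kappa = d2E/dR2 and the limits of the transition region."""
    gradient = np.gradient(energy, r)
    force = -gradient
    kappa = np.gradient(gradient, r)
    r_force_min = float(r[np.argmin(force)])  # minimum of F, before the TS
    r_force_max = float(r[np.argmax(force)])  # maximum of F, after the TS
    return force, kappa, (r_force_min, r_force_max)

r = np.linspace(-4.0, 4.0, 801)
force, kappa, (r_lo, r_hi) = reaction_force_analysis(r, model_barrier(r))
inside = (r > r_lo) & (r < r_hi)

print(f"transition region: {r_lo:.2f} <= R <= {r_hi:.2f}")
print("kappa < 0 everywhere inside:", bool(np.all(kappa[inside] < 0.0)))
```

For this model the force extrema lie at R = ±artanh(1/√3), where κ(R) changes sign; on a real IRC profile the same analysis would be applied to the tabulated energies instead of the analytic barrier.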

The reactivity characteristics of the reaction have also been explored using density-based descriptors, such as hardness and softness, within the framework of conceptual density functional theory (DFT) [24]. According to this approach, chemical structures exhibiting maximum hardness (and, consequently, minimum softness) have low polarizability, making them less likely to accept additional charge and, thus, less reactive. Interestingly, these structures are located in the bond cleavage energy reservoir (BCER) regions, where they are maximally distorted and possess highly localized position densities, see Fig. 6 in Ref [24].

Hydrogenic identity SN2 exchange reaction

In the study of elementary chemical reactions, analyzing a typical nucleophilic substitution (SN2) reaction is of particular interest due to its single-step process, unlike the two-step SN1 reaction. The anionic SN2 mechanism, depicted as Y− + RX → RY + X−, follows second-order kinetics, being first order in both the nucleophile (Y−) and the substrate (RX, where X− is the leaving group). For identity SN2 reactions, X = Y. The observed second-order kinetics result from the Walden inversion transition state, where the nucleophile displaces the leaving group from the backside in a single, concerted reaction step. Evidence indicates that this reaction mechanism is characterized by synchronous and concerted behavior [25].
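The second-order rate law can be made concrete with a short sketch. The rate constant and concentrations below are hypothetical values chosen only for illustration; the point is that the rate, k[Y−][RX], scales linearly with each concentration separately.

```python
def sn2_rate(k, conc_nucleophile, conc_substrate):
    """Second-order rate law: rate = k [Y-][RX]."""
    return k * conc_nucleophile * conc_substrate

# Hypothetical values, for illustration only
# (k in L mol^-1 s^-1, concentrations in mol L^-1).
k = 2.0e-3
base_rate = sn2_rate(k, 0.10, 0.10)

ratio_double_nucleophile = sn2_rate(k, 0.20, 0.10) / base_rate
ratio_double_substrate = sn2_rate(k, 0.10, 0.20) / base_rate
ratio_double_both = sn2_rate(k, 0.20, 0.20) / base_rate

print(ratio_double_nucleophile, ratio_double_substrate, ratio_double_both)
```

Doubling either reactant doubles the rate and doubling both quadruples it, which is the kinetic signature of a single bimolecular step.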


Fig. 6 Shannon entropies in position (solid line) and momentum (dashed line) spaces for the IRC path of the SN2 reaction at the QCISD(T)/6-311++G** level.

A comparison between the entropy sum (Fig. 8 in Ref. [24]) and the energy reveals contrasting behaviors, with the entropy sum exhibiting much more structure near the transition state (TS) region than the energy profile [24]. The nature of this richer structure is elucidated through the position and momentum entropies (illustrated in Fig. 6). These show a TS structure characterized by a delocalized position density and a localized momentum density, indicating a structurally relaxed configuration with low kinetic energy. In contrast, towards the reactive complex and reactants/products, the position densities are more localized and the momentum densities less localized, signifying structurally distorted chemical configurations with higher kinetic energy than at the TS. Around |RX| ≈ 1.7, critical points for both entropies are observed, with minima for the position entropy and maxima for the momentum entropy. Thus, ionic complexes in these regions are characterized by highly localized position densities and highly delocalized momentum densities, along with high kinetic energies, suggesting that these regions correspond to bond cleavage energy reservoirs (BCER) where bond breaking may initiate. Two additional features are noteworthy: both entropies exhibit inflection points at |RX| ≈ 1.0 and maxima at |RX| ≈ 0.5. These are regions where the entropy sum displays more defined structure, with changes in curvature and maxima, respectively [24]. Further exploration of these observations in connection with other properties will be conducted later.
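Critical and inflection points of a profile sampled along the reaction coordinate can be located with simple finite differences. The sketch below is illustrative only: it uses a hypothetical model curve, x⁴ − 2x², in place of the computed entropies, and the helper names are assumptions. Extrema are found where the first derivative changes sign and inflection points where the second derivative does.

```python
import numpy as np

def sign_change_positions(x, y):
    """Linearly interpolated x positions where y changes sign between grid points.

    Exact zeros that fall on a grid point are not detected by this simple test.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    idx = np.where(np.sign(y[:-1]) * np.sign(y[1:]) < 0)[0]
    return x[idx] - y[idx] * (x[idx + 1] - x[idx]) / (y[idx + 1] - y[idx])

def characteristic_points(x, profile):
    """Return (critical points, inflection points) of a sampled profile."""
    first = np.gradient(profile, x)
    second = np.gradient(first, x)
    return sign_change_positions(x, first), sign_change_positions(x, second)

# Hypothetical model profile with two minima, one maximum and two inflection points.
x = np.linspace(-2.0, 2.0, 400)
profile = x**4 - 2.0 * x**2
critical, inflection = characteristic_points(x, profile)

print("critical points:  ", np.round(critical, 3))
print("inflection points:", np.round(inflection, 3))
```

Applied to tabulated position or momentum entropies, the same procedure would return the values of the reaction coordinate at which minima, maxima, and changes of curvature occur.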

Fig. 7 Shannon entropy in momentum space (solid line) and the bond distance Ra (dotted line), corresponding to the Ha-C distance, and Rb (dashed line) corresponding to the (C-Hb) distance for the IRC path of the SN2 reaction. In the side frame: detail of the minima observed for the bond distances at RX ≈ -0.3. Distances in Angstroms.

To support our previous observations, we find it instructive to plot the distances between the incoming hydrogen (Ha) and the leaving hydrogen (Hb) in Fig. 7. These distances exhibit stretching and elongation features associated with bond forming and breaking situations, as anticipated. In contrast to the previously analyzed abstraction reaction, the SN2 reaction occurs in a concerted manner, where bond breaking and forming start simultaneously in a gradual and more intricate manner, as explained below. An interesting feature observable from Fig. 7 is that while the elongation of the carbon-nucleofuge (C-Hb) bond (Rb) changes its curvature significantly at RX ≈ -1.7 (in the forward direction of the reaction), the stretching of the nucleophile-carbon (Ha-C) bond (Ra) occurs smoothly. This suggests that bond breaking occurs first due to the repulsive forces exerted by the ionic molecule as the nucleophile approaches, causing the carbon-nucleofuge bond to break as the molecule begins to release its kinetic energy (resulting in a decrease of momentum entropy). In this sense, the reaction occurs in a concerted manner, with bond breaking and dissipating energy processes occurring simultaneously. In the vicinity of the transition state (TS), around RX ≈ -0.3, we observe small changes in both interatomic distances, as revealed through minima in the amplified picture, indicating the presence of repulsive forces at the TS. Moreover, we analyze the internal angle between Ha-C-H along with the Shannon entropy in position space for comparison purposes. The internal angle clearly indicates that the molecule begins to undergo the "inversion of configuration" at around RX ≈ -1.7, where the nucleophile displaces the nucleofuge from the backside in a single concerted reaction step. This process initiates at the BCER regions, as mentioned above.

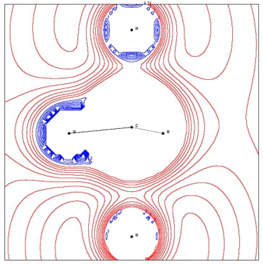

Fig. 8 The MEP contour lines in the plane of Ha-C-Hb (Ha stands for the nucleophilic atom and Hb is the nucleofuge, on bottom and top, respectively) showing negative MEP (nucleophilic regions) and positive MEP (electrophilic regions) at RX ≈ -0.3 for the SN2 reaction.

Fig. 8 illustrates the repulsive effect mentioned earlier in relation to the interatomic distances (Fig. 7), highlighting that the leaving atom Hb gains nucleophilic power (negative MEP). Although not depicted, the transition state (TS) exhibits a half-and-half electrophilic/nucleophilic character among the atoms, with the charge evenly distributed throughout the molecule.

Fig. 9 Shannon entropy in position space (solid line), the total dipole moment values in Debyes (dotted line), and the hardness values (dashed line) for the IRC path of the SN2 reaction.

The SN2 reaction serves as an excellent probe to examine the polar bond pattern characteristic of heterolytic bond-breaking, with residual ionic attraction due to the ionic nature of the products. This characteristic should be reflected through the dipole moment of the molecules along the IRC path (note that the origin of the coordinate system is placed at the molecule's center of nuclear charge). This is indeed observed in Fig. 9, where these values, along with the ones of the momentum entropy, are depicted for comparison purposes. At the transition state (TS), the dipole moment is zero, indicating the nonpolar character of the TS structure, with both nucleophile and nucleofuge atoms evenly repelling each other through their carbon bonding. At this point, the momentum and position entropies are minimal and maximal, respectively, reflecting the low kinetic energy feature of the chemically relaxed TS structure. As the reactive complex approaches the reactants/products regions, the dipole moment increases monotonically, reflecting the polar bonding character of the ionic complex, with a significant change of curvature at the TS vicinity at around |RX| ≈ 1.0 (a change of curvature was already noted for all entropies at the same region). In the transition from reactants to products, the inversion of the dipole moment values clearly reflects the inversion of configuration of the molecule (this reaction begins with a tetrahedral

It is interesting to note that, as in the case of the hydrogenic abstraction reaction, the eigenvalues of the Hessian [24] for the normal mode associated with the TS along the IRC path show maxima at the BCER and reach their minimal value at the TS. This again supports the concept of a continuum of transient states proposed by Zewail and Polanyi, i.e., a transition region rather than a single transition state [38,70].

The effectiveness of information-theoretic measures of the Shannon type in characterizing elementary chemical reactions has been evaluated. The transition state (TS) regions of both chemical reactions were identified, and a plausible connection between the TS and the Shannon entropies was provided and numerically verified. Furthermore, through these chemical probes, we observed the basic chemical phenomena of bond breaking and forming, demonstrating that Shannon measures are highly sensitive in detecting these events. Additionally, while the transition state of a reaction is typically identified by the presence of a negative force constant for one normal vibrational mode, corresponding to an imaginary frequency, the work of Zewail and Polanyi [70] in transition state spectroscopy has introduced the concept of a reaction having a continuum of transient states, i.e., a transition region rather than a single transition state. It is noteworthy that the results of the present study indeed demonstrate the existence of such a region between the bond cleavage energy reservoirs (BCER), before and after the TS. This finding is consistent with reaction force, F(R), studies, where the reaction force constant, κ(R), also reflects this continuum, bounded by the minimum and maximum of F(R), at which κ(R) = 0. Results of the study have been reported in Ref. [24].

3D complexity analysis of the hydrogenic abstraction reaction

In recent years, there has been a growing interest in applying complexity concepts to the study of physical, chemical, and biological phenomena. Complexity measures are generally understood as indicators of pattern, structure, and correlation within systems or processes. Several mathematical approaches have been proposed to quantify complexity and information, including Kolmogorov-Chaitin or algorithmic information theory [71], Shannon and Weaver's classical information theory [72], Fisher information [58,73], logical depth [74], and thermodynamic depth [75]. The definition of complexity is not universally agreed upon, and its quantitative characterization has been a significant research focus, receiving considerable attention [76,77]. The utility of each complexity definition depends on the type of system or process under study, the level of description, and the scale of interactions among particles, atoms, molecules, biological systems, etc. Fundamental concepts like uncertainty or randomness are often employed in complexity definitions, but other concepts such as clustering, order, localization, or organization might also play important roles in characterizing system complexity. It is unclear how these concepts should be integrated to quantitatively assess system complexity. However, recent proposals have formulated complexity as a product of two factors, accounting for order/disequilibrium and delocalization/uncertainty. For instance, the López-Ruiz-Mancini-Calbet (LMC) shape complexity measure [76-79] satisfies the boundary conditions by reaching minimal values in the extremely ordered and disordered limits.

The LMC measure is constructed as the product of two significant information-theoretic quantities: the disequilibrium D (also known as self-similarity [80] or information energy [81]), which quantifies the deviation of the probability density from uniformity (equiprobability) [79,82], and the Shannon entropy S, which measures the randomness/uncertainty of the probability density [72] and quantifies the departure from localizability. Both global quantities are closely related to the spread of a probability distribution.

The Fisher-Shannon product (FS) has been used as a measure of atomic correlation [83] and defined as a statistical complexity measure [84]. The product of the power entropy J, explicitly defined in terms of the Shannon entropy, and the Fisher information measure I combines global characteristics (depending on the distribution as a whole) with local characteristics (related to the gradient of the distribution), while preserving the general complexity properties. Unlike the LMC complexity, which relies on the disequilibrium to measure the global deviation from uniformity, the Fisher-Shannon complexity uses the Fisher information to quantify the local disorder of the distribution [73,85].

We have undertaken an information-theoretical complexity study of the hydrogenic abstraction reaction [29], using information-theoretical measures and planes as well as the LMC and FS complexity products. Patterns of uncertainty/localizability, disorder/narrowness, and disequilibrium/uniformity were characterized through the S, I, and D functionals, respectively.

Complexities

The LMC complexity is defined as the product of two information-theoretic measures. In position space, the probability density ρ(r) is employed to obtain the C(LMC) complexity [76-79]:

$$C_r(\mathrm{LMC}) = D_r \, e^{S_r} = D_r L_r,$$

where $D_r$ is the disequilibrium information measure [80,81],

$$D_r = \int \rho^2(\mathbf{r}) \, d^3r,$$

and $S_r$ is the Shannon entropy [72], Eq. (1),

$$S_r = -\int \rho(\mathbf{r}) \ln \rho(\mathbf{r}) \, d^3r,$$

from which the exponential entropy $L_r = e^{S_r}$ is defined.

It is important to mention that the LMC complexity of a system must comply with the following lower bound [84]:

$$C(\mathrm{LMC}) \geq 1.$$
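A minimal numerical sketch of this bound follows. It assumes the standard form C(LMC) = D·exp(S), with D the integral of the squared density and S the Shannon entropy, and uses one-dimensional model densities instead of molecular ones; the function names are hypothetical. A uniform density reaches the lower bound of 1, whereas a Gaussian gives the value √(e/2), independent of its width.

```python
import numpy as np

def trapezoid(y, x):
    """Trapezoidal rule, written out to avoid NumPy version differences."""
    y = np.asarray(y, dtype=float)
    x = np.asarray(x, dtype=float)
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))

def lmc_complexity(density, grid):
    """C(LMC) = D * exp(S) for a normalized density sampled on a 1D grid."""
    f = np.asarray(density, dtype=float)
    disequilibrium = trapezoid(f**2, grid)           # D = integral of f^2
    integrand = np.zeros_like(f)
    positive = f > 0.0
    integrand[positive] = -f[positive] * np.log(f[positive])
    entropy = trapezoid(integrand, grid)             # S = -integral of f ln f
    return disequilibrium * np.exp(entropy)

# Uniform density on [0, 3]: expected to sit at the lower bound.
x_box = np.linspace(0.0, 3.0, 3001)
c_uniform = lmc_complexity(np.full_like(x_box, 1.0 / 3.0), x_box)

# Unit-width Gaussian density.
x = np.linspace(-12.0, 12.0, 4801)
c_gauss = lmc_complexity(np.exp(-x**2 / 2.0) / np.sqrt(2.0 * np.pi), x)

print(f"uniform:  C(LMC) = {c_uniform:.6f}")
print(f"Gaussian: C(LMC) = {c_gauss:.6f}")
```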

The FS complexity in position space, $C_r(\mathrm{FS})$, and similarly in momentum space, $C_p(\mathrm{FS})$, is defined in terms of the product of the Fisher information I [73,85] and the power entropy J [86] (see below), where

$$I_r = \int \rho(\mathbf{r}) \left| \nabla \ln \rho(\mathbf{r}) \right|^2 d^3r$$

in position space and

$$I_p = \int \gamma(\mathbf{p}) \left| \nabla \ln \gamma(\mathbf{p}) \right|^2 d^3p$$

in momentum space, where ρ(r) and γ(p) denote the normalized-to-unity electron density distributions in the position and momentum spaces, respectively. The power entropy [86] in position space, $J_r$, is defined as

$$J_r = \frac{1}{2\pi e} \, e^{\frac{2}{3} S_r},$$

and similarly for $J_p$ in momentum space, both depending on the Shannon entropy defined in Eq. (1). The FS complexity in position space is thus given by

$$C_r(\mathrm{FS}) = I_r \cdot J_r,$$

and similarly

$$C_p(\mathrm{FS}) = I_p \cdot J_p$$

in momentum space.

Let us remark that the factors in the power Shannon entropy J are chosen to preserve the invariance under scaling transformations, as well as the rigorous relationship [87]

$$C(\mathrm{FS}) \geq n,$$

with n being the space dimensionality, thus providing a universal lower bound to the FS complexity. The definition in Eq. (18) corresponds to the particular case n = 3, the exponent containing a factor 2/n for arbitrary dimensionality.

Note that the inequalities above remain valid for distributions normalized to unity, which is the choice employed throughout this work for the 3-dimensional molecular case.
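The lower bound for the FS complexity can be checked on a simple model. The sketch below assumes the standard definitions, I = ∫|∇ρ|²/ρ d³r and J = exp(2S/3)/(2πe), and a spherically symmetric 3D Gaussian density normalized to unity (not a molecular density); the function names are hypothetical. For this density the product I·J equals the dimensionality n = 3, independently of the width, which also illustrates the scaling invariance.

```python
import numpy as np

def trapezoid(y, x):
    """Trapezoidal rule, written out to avoid NumPy version differences."""
    y = np.asarray(y, dtype=float)
    x = np.asarray(x, dtype=float)
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))

def gaussian_3d(r, sigma):
    """Spherically symmetric 3D Gaussian density, normalized to unity."""
    return np.exp(-r**2 / (2.0 * sigma**2)) / (2.0 * np.pi * sigma**2) ** 1.5

def fisher_shannon_3d(rho, r):
    """C(FS) = I * J for a spherical density sampled on a radial grid."""
    rho = np.asarray(rho, dtype=float)
    weight = 4.0 * np.pi * r**2                  # radial volume element
    drho = np.gradient(rho, r)                   # |grad rho| = |d rho / d r|
    positive = rho > 0.0
    fisher_integrand = np.zeros_like(rho)
    fisher_integrand[positive] = drho[positive] ** 2 / rho[positive]
    entropy_integrand = np.zeros_like(rho)
    entropy_integrand[positive] = -rho[positive] * np.log(rho[positive])
    fisher = trapezoid(weight * fisher_integrand, r)
    entropy = trapezoid(weight * entropy_integrand, r)
    power_entropy = np.exp(2.0 * entropy / 3.0) / (2.0 * np.pi * np.e)
    return fisher * power_entropy

results = {}
for sigma in (0.7, 1.5):
    r = np.linspace(0.0, 12.0 * sigma, 6001)
    results[sigma] = fisher_shannon_3d(gaussian_3d(r, sigma), r)
    print(f"sigma = {sigma}: C(FS) = {results[sigma]:.5f}")
```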

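Aside from the analysis of the position and momentum information measures, we have defined these magnitudes in the product rp-space, characterized by the probability density

$$f(\mathbf{r}, \mathbf{p}) = \rho(\mathbf{r}) \, \gamma(\mathbf{p}),$$

for which the complexity measures are

$$C_{rp}(\mathrm{LMC}) = D_{rp} L_{rp} = C_r(\mathrm{LMC}) \, C_p(\mathrm{LMC})$$

and

$$C_{rp}(\mathrm{FS}) = I_{rp} J_{rp} = C_r(\mathrm{FS}) \, C_p(\mathrm{FS}).$$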

From the above two equations, it is evident that the features and patterns of both LMC and FS complexity measures in the product space will be determined by those of each conjugated space. However, the numerical analyses conducted in the next section reveal that the momentum space contribution plays a more significant role compared to the one in position space.
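The multiplicative structure of the LMC complexity in the product space can be verified in a few lines. The derivation below is a consistency check under stated assumptions rather than a reproduction of the original equations: it assumes the product density f(r, p) = ρ(r)γ(p), with both factors normalized to unity, and the standard definitions of the disequilibrium and the Shannon entropy.

```latex
\begin{align*}
D_{rp} &= \iint \rho^2(\mathbf{r})\,\gamma^2(\mathbf{p})\; d^3r\, d^3p = D_r D_p, \\
S_{rp} &= -\iint \rho(\mathbf{r})\,\gamma(\mathbf{p})
          \left[\ln \rho(\mathbf{r}) + \ln \gamma(\mathbf{p})\right] d^3r\, d^3p
        = S_r + S_p, \\
L_{rp} &= e^{S_{rp}} = L_r L_p, \\
C_{rp}(\mathrm{LMC}) &= D_{rp} L_{rp} = C_r(\mathrm{LMC})\, C_p(\mathrm{LMC}).
\end{align*}
```

The additivity of the entropy in the second line relies on the normalization of ρ and γ, which is why the normalization-to-unity convention matters for the product-space quantities.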

3D complexity analysis of the hydrogenic abstraction reaction

The electronic structure calculations performed in the present study were carried out with the Gaussian 03 suite of programs [62]. Reported TS geometrical parameters for the abstraction reaction were employed [64]. Calculations for the IRC were performed at the MP2 level of theory (UMP2 for the abstraction reaction), with at least 35 points for each direction (forward/reverse) of the IRC. Next, a high level of theory and a well-balanced basis set (with diffuse and polarized orbitals) were chosen to determine all of the properties of the chemical structures along the IRC. Thus, the QCISD(T) method was employed with the 6-311++G** basis set, unless otherwise stated. The molecular information measures S, D, I, and J, the information planes (D-L), (I-J), and (I-D), and the complexity measures C(LMC) and C(FS) were calculated in position and momentum spaces for the IRC path of the abstraction reaction, employing software developed in our laboratory along with 3D numerical integration routines [66] and the DGRID suite of programs [67].

The IRC represents a minimum energy reaction pathway (MERP), resembling a chemical road crossing through a 3D horse saddle that forms an energy hypersurface. We constructed such a 3D saddle surface by extending the internal coordinates of the three-hydrogen complex Ha···Hb···Hc in all directions, far beyond the IRC, using an arbitrary equidistant grid ranging over 0.5 a.u. ≤ R12 ≤ 3.35 a.u. and 0.5 a.u. ≤ R13 ≤ 3.35 a.u. in steps of 0.05 a.u. Once the grid was formed, a 3D information-theoretical analysis of the hydrogenic abstraction reaction H•a + H2 ⇋ H2 + H• was conducted using information-theoretical measures and the dyadic products of statistical complexity (LMC and FS). The focus was set on recognizing 3D patterns of localizability, disorder, and uniformity through the hypersurfaces of S, I, and D, respectively. This is achieved by calculating the 3D surfaces of the IT components, along with the Fisher-Shannon and LMC complexities, in both position (r) and momentum (p) spaces. The diagonal path of the IT-functional hypersurfaces is examined in light of the equidistant dissociation of the triatomic complex Ha···Hb···Hc at the TS.
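The layout of such an equidistant grid can be sketched as follows. The limits and step are those quoted in the text; the variable names are hypothetical, and no electronic structure calculation is performed here. The sketch only builds the coordinate pairs (R12, R13) at which the densities would be evaluated and extracts the diagonal R12 = R13 used for the equidistant dissociation path.

```python
import numpy as np

# Grid limits and step in atomic units, as quoted in the text.
R_MIN, R_MAX, STEP = 0.5, 3.35, 0.05

# Use linspace with an explicit point count to avoid floating-point
# surprises at the upper limit.
n_points = int(round((R_MAX - R_MIN) / STEP)) + 1
r_axis = np.linspace(R_MIN, R_MAX, n_points)

# r12[i, j] and r13[i, j] hold the two internal coordinates of grid point (i, j).
r12, r13 = np.meshgrid(r_axis, r_axis, indexing="ij")

# Diagonal path R12 = R13 (equidistant dissociation of the triatomic complex).
diagonal = np.column_stack((np.diag(r12), np.diag(r13)))

print("points per axis:  ", n_points)
print("total grid points:", r12.size)
print("first/last diagonal points:", diagonal[0], diagonal[-1])
```

Each grid point would then require one single-point calculation and one evaluation of the information-theoretic functionals, which is why the step size controls the overall cost of the 3D analysis.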

Understanding the structural characteristics of distributions in both position and momentum spaces, particularly in terms of global spreading (delocalization) of densities, can be achieved through Shannon entropies in conjugated spaces. However, Fisher information is more suitable for describing the behavior of densities concerning their local changes [73,85]. This measure evaluates the pointwise concentration of the electronic probability cloud in each space by examining the gradient of the electron distribution. This allows it to reveal changes in density and provide a quantitative assessment of its oscillatory nature (smoothness).
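The different sensitivities of the two measures can be illustrated with a small numerical experiment. The sketch below assumes one-dimensional model densities (a smooth Gaussian and the same Gaussian modulated by a hypothetical short-wavelength ripple), not molecular densities, and the function names are illustrative. The ripple barely changes the Shannon entropy, a global measure of spread, but increases the Fisher information, which depends on the gradient, several-fold.

```python
import numpy as np

def trapezoid(y, x):
    """Trapezoidal rule, written out to avoid NumPy version differences."""
    y = np.asarray(y, dtype=float)
    x = np.asarray(x, dtype=float)
    return float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))

def shannon_entropy(f, x):
    """S = -integral of f ln f (global measure of spread)."""
    integrand = np.zeros_like(f)
    positive = f > 0.0
    integrand[positive] = -f[positive] * np.log(f[positive])
    return trapezoid(integrand, x)

def fisher_information(f, x):
    """I = integral of (f')^2 / f (local measure, sensitive to oscillations)."""
    df = np.gradient(f, x)
    integrand = np.zeros_like(f)
    positive = f > 0.0
    integrand[positive] = df[positive] ** 2 / f[positive]
    return trapezoid(integrand, x)

x = np.linspace(-10.0, 10.0, 20001)
smooth = np.exp(-x**2 / 2.0) / np.sqrt(2.0 * np.pi)

# Hypothetical ripple: 20 % amplitude, wavenumber 20; renormalize numerically.
rippled = smooth * (1.0 + 0.2 * np.cos(20.0 * x))
rippled /= trapezoid(rippled, x)

s_smooth, s_rippled = shannon_entropy(smooth, x), shannon_entropy(rippled, x)
i_smooth, i_rippled = fisher_information(smooth, x), fisher_information(rippled, x)

print(f"Shannon entropy:    smooth = {s_smooth:.4f}, rippled = {s_rippled:.4f}")
print(f"Fisher information: smooth = {i_smooth:.4f}, rippled = {i_rippled:.4f}")
```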

It is important to note that the global or local nature of these information measures means that each can only partially describe the complete chemical phenomena, which include regions such as reactant/product complexes (R/P), bond breaking/forming regions (B-B/F), bond cleavage energy reservoirs (BCER), and transition states (TS), as well as mechanistic behaviors. Therefore, to fully understand a chemical process, a complexity analysis is essential. These information-theoretical measures offer complementary perspectives: the disequilibrium (D) quantifies deviations from uniformity, while the exponential Shannon entropy (L) measures deviations from localizability, and together they form the C(LMC) complexity measure. In a similar vein, the Fisher information (I) assesses deviations from disorder and the power entropy (J) evaluates deviations from localizability, forming the basis of the C(FS) measure (Eqs. 8, 11, 16, and 17).

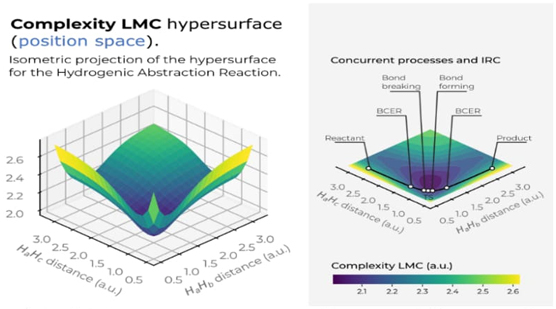

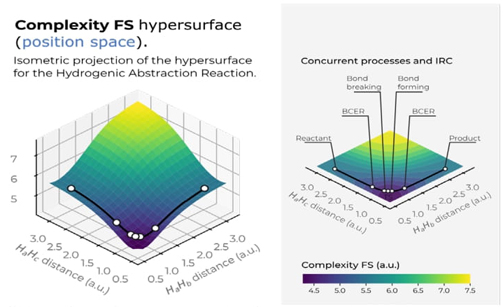

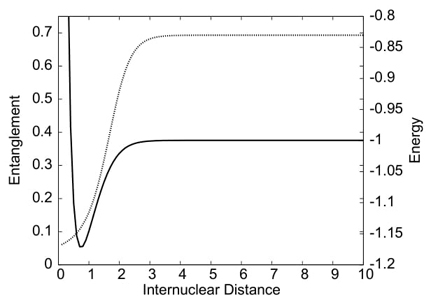

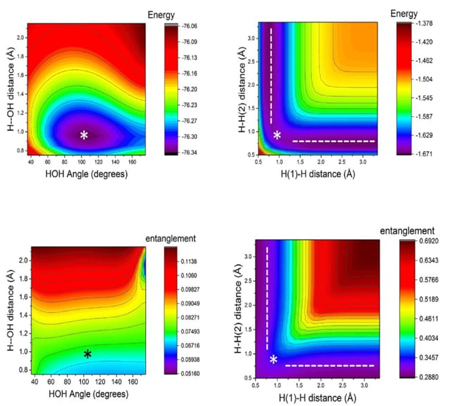

In Fig. 9, we have depicted the LMC complexity surface in 3D in position space as a function of the internal coordinates R12 and R13. A top view of the hypersurface of the C(LMC) complexity measure in the X-Y plane is also shown. Similarly, Fig. 10 presents the C(FS) complexity measure; both figures indicate the IRC pathway of the reaction along with the chemically significant zones: R/P, B-B/F, BCER, and TS. The color codes represent lower to higher complexity values, ranging from bluish to yellowish.

Fig. 9 Hypersurface of the LMC complexity measure in position space (left) and top view of its 3D surface in the X−Y plane (right) for the hydrogenic abstraction reaction in the grid of the internal coordinates, R12 and R13, of the three-hydrogen complex Ha···Hb···Hc. Color codes indicate lower to higher LMC-complexity values running from yellowish to bluish ones, respectively.

Fig. 9 displays a deep narrow well that encompasses all the crucial regions of the reaction, where concurrent phenomena occur, such as the B-B/F, BCER, and TS regions. This suggests that significant chemical changes happen in regions of lower LMC complexity (greenish areas in Fig. 9). It would be worthwhile to explore further the chemical implications of this low-complexity well, which might indicate a boundary delimiting structurally stable molecules. Notably, the Fisher information hypersurface (Fig. 5 in Ref. [90]) reveals a similar region, despite being a different type of functional compared to the global ones used to construct C(LMC). This indicates that both local and global IT functionals detect this low-complexity boundary, so the deep narrow well likely represents a chemically significant boundary. Further, the 3D surface of C(LMC) shows higher LMC-complexity regions at the dissociated complex species, i.e., (Ha···Hb···Hc)· → H2 + H·c at very large Rbc, (Ha···Hb···Hc)· → H·a + H2 at very large Rab, and (Ha···Hb···Hc)· → (Ha + Hb + Hc)· at very large Rab and Rbc. This observation allows us to conclude that the dissociated species exhibit higher complexity.

Fig. 10 Hypersurface of the Fisher−Shannon complexity measure in position space (left) and top view of its 3D surface in the X−Y plane (right) for the hydrogenic abstraction reaction in the grid of the internal coordinates, R12 and R13, of the three-hydrogen complex Ha···Hb···Hc. Color codes indicate lower to higher Fisher−Shannon complexity values running from yellowish to bluish ones, respectively.

Fig. 10 illustrates a deep narrow slope at small values of the internal coordinates R12 and R13, signifying a region of low Fisher-Shannon complexity, C(FS). Interestingly, this region also encloses the chemically significant zones where concurrent phenomena occur, such as B-B/F, BCER, and TS, similarly to the LMC complexity depicted in Fig. 9. Additionally, higher Fisher-Shannon complexity regions are found in the dissociation zone of this complex radical molecule, (Ha···Hb···Hc)· → (Ha + Hb + Hc)· at very large Rabc, which contrasts with the LMC complexity, which shows higher values for all types of dissociated species. The boundaries of the low-complexity regions for both measures, C(LMC) and C(FS), enclose complex radical molecules with lower complexity values than the TS, as seen in Figs. 9 and 10. This observation is chemically significant and warrants further investigation. Furthermore, these figures show that the hypersurfaces of both complexities in position space fold around the TS region, revealing an attractor-like spatial zone.

The combined analyses of the 3D structure of the information-theoretic (IT) functionals demonstrate that the chemically significant regions at the onset of the TS are thoroughly characterized by aspects of localizability (S), uniformity (D), and disorder (I). Additionally, novel regions of low complexity suggest new boundaries for chemically stable complex molecules. The study further reveals that the chemical reaction occurs in low-complexity regions where concurrent phenomena such as bond-breaking/forming (B-B/F), bond-cleavage energy reservoirs (BCER), spin-coupling (SC), and the transition state (TS) take place. The complexity measures in both spaces display folded hypersurfaces around the TS region, indicating attractor-like spatial/momentum zones. Moreover, the focus has been on the diagonal part of the hypersurface of the IT functionals, beyond the IRC path itself, to analyze the dissociation process of the triatomic transition-state complex, revealing additional interesting features of the bond-breaking (B-B) process. The material presented in this Section has been recently published (Ref. [90]).

Atoms and molecules

The Fisher-Shannon and LMC shape complexities, along with the Shannon-disequilibrium, Fisher-Shannon, and Fisher-disequilibrium information planes, which comprise two localization-delocalization factors, have been recently computed in both position and momentum spaces for the one-particle densities of 90 selected molecules of various chemical types [91]. It was found that while the two measures of complexity show only general trends, the localization-delocalization planes exhibit distinct chemically significant patterns. Several molecular properties (energy, ionization potential, total dipole moment, hardness, electrophilicity) were analyzed to interpret and understand the chemical nature of the composite information-theoretic measures. The results indicate that these measures can detect not only randomness or localization but also pattern and organization.

For larger molecules, Shannon Information Theory (IT) has been applied to analyze the growth behavior of nanostructures [92-94].

Some other topics of interest have been included in this part of the Review: (i) predominant information quality schemes (PIQS) of amino acids [95], (ii) 3D information-theoretic analyses of the chemical space from simple atomic and molecular systems to biological and pharmacological molecules [96], and (iii) the separability problem of atoms in molecules [97].

Complexity and information planes of selected molecules

Following the complexity concepts discussed above, we conducted an information-theoretical analysis of ninety molecular systems of various chemical types to analyze and quantify their information content [91]. The focus was on recognizing patterns of uncertainty, order, and organization by employing several molecular properties such as energy, ionization potential, hardness, and electrophilicity. We computed the information components, as well as the Fisher-Shannon and LMC complexities, C(FS) and C(LMC), respectively (Eqs. 8 and 16). These information functionals of the one-particle density were computed in position (r) and momentum (p) spaces, as well as in a joint product space (rp) that contains more comprehensive information about the system, Eqs. (19) and (20). Additionally, the Fisher-Shannon (I-J) and the disequilibrium-Shannon (D-L) planes were studied to identify patterns of organization.

These complexity measures and their associated information planes were analyzed in terms of their chemical properties, number of electrons, and geometrical features. The molecular set chosen for this study includes a variety of organic and inorganic chemical systems, such as aliphatic compounds, hydrocarbons, aromatics, alcohols, ethers, and ketones. This set represents a range of closed-shell systems, radicals, isomers, and molecules containing heavy atoms such as sulfur, chlorine, magnesium, and phosphorus. The geometries needed for the single-point energy calculations were obtained from the Computational Chemistry Comparison and Benchmark Database from NIST [98]. The molecular set can be organized into isoelectronic groups as follows:

N-10: NH 3 (ammonia),

N-12: LiOH (lithium hydroxide),

N-14: HBO (boron hydride oxide), Li 2 O (dilithium oxide),

N-15: HCO (formyl radical), NO (nitric oxide),

N-16: H 2 CO (formaldehyde), NHO (nitrosyl hydride), O 2 (oxygen),

N-17: CH 3 O (methoxy radical),

N-18: CH 3 NH 2 (methyl amine), CH 3 OH (methyl alcohol), H 2 O 2 (hydrogen peroxide), NH 2 OH (hydroxylamine),

N-20: NaOH (sodium hydroxide),

N-21: BO 2 (boron dioxide), C 3 H 3 (propargyl radical), MgOH (magnesium hydroxide), HCCO (ketenyl radical),

N-22: C 3 H 4 (cyclopropene), CH 2 CCH 2 (allene), CH 3 CCH (propyne), CH 2 NN (diazomethane), CH 2 CO (ketene), CH 3 CN (acetonitrile), CH 3 NC (methyl isocyanide), CO 2 (carbon dioxide), FCN (cyanogen fluoride), HBS (hydrogen boron sulfide), HCCOH (ethynol), HCNO (fulminic acid), HN 3 (hydrogen azide), HNCO (isocyanic acid), HOCN (cyanic acid), N 2 O (nitrous oxide), NH 2 CN (cyanamide),

N-23: NO 2 (nitrogen dioxide), NS (mononitrogen monosulfide), PO (phosphorus monoxide), C 3 H 5 (allyl radical), CH 3 CO (acetyl radical),

N-24: C 2 H 4 O (ethylene oxide), C 2 H 5 N (aziridine), C 3 H 6 (cyclopropane), CF 2 (difluoromethylene), CH 2 O 2 (dioxirane), CH 3 CHO (acetaldehyde), CHONH 2 (formamide), FNO (nitrosyl fluoride), H 2 CS (thioformaldehyde), HCOOH (formic acid), HNO 2 (nitrous acid), NHCHNH 2 (aminomethanimine), O 3 (ozone), SO (sulfur monoxide),

N-25: CH 2 CH 2 CH 3 (n-propyl radical), CH 3 CHCH 3 (isopropyl radical), CH 3 OO (methylperoxy radical), FO 2 (dioxygen monofluoride), NF 2 (difluoroamino radical), CH 3 CHOH (ethoxy radical), CH 3 S (thiomethoxy),

N-26: C 3 H 8 (propane), CH 3 CH 2 NH 2 (ethylamine), CH 3 CH 2 OH (ethanol), CH 3 NHCH 3 (dimethylamine), CH 3 OCH 3 (dimethyl ether), CH 3 OOH (methyl peroxide), F 2 O (difluorine monoxide),

N-30: ClCN (chlorocyanogen), OCS (carbonyl sulfide), SiO 2 (silicon dioxide),

N-31: PO 2 (phosphorus dioxide), PS (phosphorus sulfide),

N-32: ClNO (nitrosyl chloride), S 2 (sulfur diatomic), SO 2 (sulfur dioxide),

N-33: OClO (chlorine dioxide), ClO 2 (chlorine dioxide),

N-34: CH 3 CH 2 SH (ethanethiol), CH 3 SCH 3 (dimethyl sulfide), H 2 S 2 (hydrogen disulfide), SF 2 (sulfur difluoride),

N-38: CS 2 (carbon disulfide),

N-40: CCl 2 (dichloromethylene), S 2 O (disulfur monoxide),

N-46: MgCl 2 (magnesium dichloride),

N-48: S 3 (sulfur trimer), SiCl 2 (dichlorosilylene),

N-49: ClS 2 (sulfur chloride).
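The grouping above follows from the electron count of each neutral molecule, that is, the sum of the atomic numbers of its atoms. A minimal Python sketch of this bookkeeping is given below. The helper names are hypothetical, formulas must be written without spaces or parentheses, and only the elements present in this molecular set are tabulated.

```python
import re
from collections import defaultdict

# Atomic numbers for the elements that occur in the molecular set.
Z = {"H": 1, "Li": 3, "B": 5, "C": 6, "N": 7, "O": 8, "F": 9,
     "Na": 11, "Mg": 12, "Si": 14, "P": 15, "S": 16, "Cl": 17}

def electron_count(formula):
    """Number of electrons of a neutral molecule given as a condensed formula."""
    total = 0
    for symbol, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        total += Z[symbol] * (int(count) if count else 1)
    return total

def isoelectronic_groups(formulas):
    """Map each electron count N to the list of formulas sharing it."""
    groups = defaultdict(list)
    for formula in formulas:
        groups[electron_count(formula)].append(formula)
    return dict(sorted(groups.items()))

# A few members of the set, written without spaces.
sample = ["NH3", "LiOH", "HBO", "Li2O", "CO2", "N2O", "HCNO", "MgCl2", "ClS2"]
print(isoelectronic_groups(sample))
```

Applied to the sample, the sketch places CO 2, N 2 O, and HCNO together in the N-22 group and assigns MgCl 2 and ClS 2 to N-46 and N-49, in agreement with the list.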

The electronic structure calculations for the whole set of molecules were performed with correlated wavefunctions at high levels of theory.

Complexity measures

In contrast to the atomic case, where complexities exhibit a high level of natural organization due to periodicity properties [99,100], the molecular case necessitates some form of organization or classification, which can be influenced by various factors such as structural, energetic, and entropic characteristics. Therefore, we analyzed the molecular complexities, C(LMC) and C(FS), as functions of key chemical properties of interest, including total energy, dipole moment, ionization potential, hardness, and electrophilicity. By establishing a link between the different complexity measures and these chemical properties, we aimed to gain insights into the organization, order, and uncertainty features of the molecular systems.

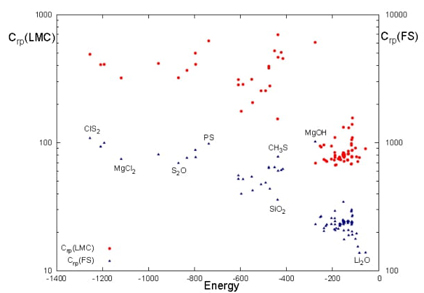

Fig. 11 C(LMC) (red circles) and C(FS) (blue triangles) complexities as a function of the total energy (a.u.) for the set of ninety molecules in the product space (rp).

In Fig. 11, we have depicted the C(FS) and C(LMC) complexities as functions of the total molecular energy (in atomic units) within the product space (rp). Observations from the figure indicate that both complexity measures show similar patterns. A noticeable trend is that molecules with higher energies tend to exhibit increased complexity values for both C(FS) and C(LMC), compared to those with lower energies. The molecules analyzed are categorized into four energy intervals: E > -400, E

These findings suggest that molecular complexity is influenced by several factors beyond energy, including molecular structure, composition, and chemical functionality, which contribute to varying degrees of complexity. For example, the lowest complexity values within each energy group typically align with molecules that share similar geometrical configurations, while higher complexities are found in molecules containing heavier atoms. Each complexity measure combines two components: one is always linked to the Shannon entropy S, and the other distinguishes the two measures, namely the disequilibrium D for C rp (LMC) and the Fisher information I for C rp (FS), representing global and local perspectives, respectively. Despite these distinctions, no significant structural differences are evident between the complexities determined by D or I.

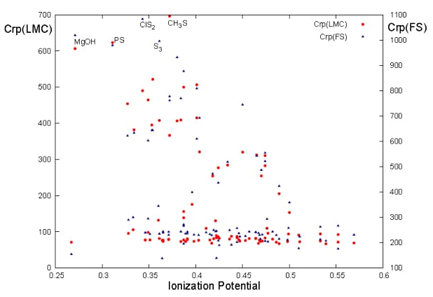

Fig. 12 C(LMC) (red circles) and C(FS) (blue triangles) complexities as a function of the ionization potential (a.u.) for the set of ninety molecules in the product space (rp).

Ionization potential (IP) is utilized to gauge the chemical stability of molecules in relation to their complexities. As depicted in Fig. 12, molecules with higher IP values, indicating greater stability, appear on the right side of the figure. This observation highlights a correlation between molecular complexities and stability: higher LMC and FS complexities are associated with molecules that are more reactive and, consequently, less stable.

Information planes

In our search for patterns and organization, we have found it useful to analyze the molecules in our study based on their energy and the number of electrons. This analysis involves plotting the contribution of information measures D (order) and L (uncertainty) to the total complexity of the LMC, as well as measures I (organization) and J (uncertainty) to the FS complexity. Figures 13 and 14 show the behavior of energy in the D p -L p plane and the effect of the number of electrons in the I p -J p planes, respectively. When analyzing the energy in the information planes, we have found it more beneficial to focus on the corresponding planes in momentum space, as the momentum density is directly linked to energy. In our publication (Ref. [91]), we presented results pertaining to the behavior of energy in the I p -J p planes and the influence of the number of electrons in the D r -L r planes.

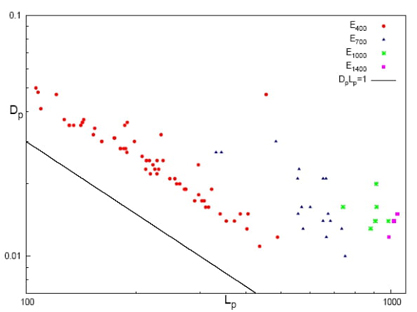

Fig. 13 Disequilibrium-Shannon plane (D-L) in momentum space for energetically different groups: E400 for molecules with E > -400 (red circles), E700 for E

In Fig. 13, the D p -L p plane is shown in a double-logarithmic scale for a set of molecules grouped according to their energy intervals. The energy intervals are labeled as E400, E700, E1000, and E1400, corresponding to different ranges of energy, i.e., E400 for molecules with E > -400 a.u., E700 for E

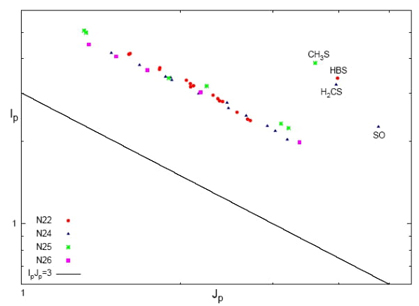

Fig. 14 Fisher-Shannon plane (I-J) in momentum space of the isoelectronic series of 22 (red circles), 24 (blue triangles), 25 (green stars), and 26 (magenta boxes) electrons. Double-logarithmic scale. The lower bound (I p · J p = 3) is depicted by the black line. Molecules with larger energy values are shown at the upper left corner of the figure.

In the analysis of pattern and organization for the isoelectronic series, we have examined how different information measures contribute to the overall complexity of the system. Fig. 14 displays this analysis in the I p -J p plane for a selection of isoelectronic molecular series in momentum space, with N = 22, 24, 25, and 26 electrons. The parallel lines on the graph represent isocomplexity lines, indicating regions of equal complexity. Deviations from these lines indicate higher levels of complexity. When there is an increase in uncertainty (J), there is typically a corresponding decrease in accuracy (I) along these lines. This linear relationship is observed consistently across all isoelectronic series in momentum space, as illustrated in Fig. 14. The lines were obtained by linear regression of the accuracy and uncertainty values, yielding high correlation coefficients for each series (N22: 0.989, N24: 0.994, N25: 0.993, N26: 0.998). It is important to note that systems outside the isocomplexity lines belong to molecules with higher complexity, as discussed earlier. These systems typically contain heavier atoms, as indicated in Fig. 14, leading to higher uncertainty values (J p ) and therefore greater complexity. Furthermore, the isocomplexity lines representing the same isoelectronic molecular series exhibit significant deviations (higher complexity) from the strict lower bound, as shown in the figure. In the conjugate position space (I r -J r ), a similar trend is observed. Each isoelectronic series demonstrates a linear relationship, with exceptions for molecules with the highest complexity values that fall outside the isocomplexity lines. These complex molecules have lower values of J r and higher values of I r , in contrast to the behavior observed in Fig. 14.
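On a double-logarithmic scale, a line of constant complexity I·J = C reads log I = log C - log J, so an isocomplexity line has slope -1 and its intercept gives the complexity. The following Python sketch shows how such a fit and its correlation coefficient could be obtained. The function name is hypothetical and the data points are synthetic values placed on the line I·J = 30; they are not taken from Ref. [91].

```python
import numpy as np

def fit_isocomplexity(J, I):
    """Least-squares line log10(I) = slope*log10(J) + intercept, plus Pearson r."""
    x, y = np.log10(J), np.log10(I)
    slope, intercept = np.polyfit(x, y, 1)
    r = np.corrcoef(x, y)[0, 1]
    return slope, intercept, r

# Synthetic points (hypothetical, not from Ref. [91]) lying on I*J = 30.
J = np.array([0.5, 1.0, 2.0, 4.0, 8.0])
I = 30.0 / J
slope, intercept, r = fit_isocomplexity(J, I)
print(slope, 10 ** intercept, r)
```

Points lying exactly on an isocomplexity line give a slope of -1 and |r| = 1, with 10 raised to the intercept recovering the complexity value; scatter about the line lowers |r|.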

Despite the limitations of using all information products as complexity measures, such as the requirement of invariance under scaling, translation, and replication, we found it intriguing to examine patterns of order and organization in the I-D plane. Our analysis of these patterns is detailed in Ref. [91].

In our analysis, we found that fulminic acid (HCNO) has the lowest ionization potential compared to isocyanic acid (HNCO) and cyanic acid (HOCN). This indicates that fulminic acid is less stable and more reactive than the other two isomers. This observation is consistent with the complexity values obtained from our analysis. From Table 1, we can see that fulminic acid has larger values for the complexity measures compared to isocyanic acid and cyanic acid. This suggests that fulminic acid possesses more intricate patterns of order and organization in its molecular structure. The higher complexity values indicate a higher level of complexity and structural diversity in this molecule. Based on our previous discussion regarding the relationship between complexity measures and chemical properties, a molecule with higher complexity values is expected to be more reactive. In this case, fulminic acid, being the isomer with the highest complexity measures, aligns with this expectation and can be considered as a more reactive molecule. Overall, the analysis of the complexity values for these isoelectronic isomers provides insights into their chemical properties and corroborates the experimental knowledge regarding their stability and reactivity.

Table 1 Chemical properties and complexity measures for the isomers HCNO, HNCO and HOCN in atomic units (a.u.).

| Molecule | Energy | Ionization Potential | Hardness | Electrophilicity | Crp(LMC) | Crp(FS) |
|---|---|---|---|---|---|---|
| HCNO | -168.134 | 0.403 | 0.294 | 0.020 | 76.453 | 229.303 |
| HNCO | -168.261 | 0.447 | 0.308 | 0.031 | 74.159 | 223.889 |
| HOCN | -168.223 | 0.453 | 0.305 | 0.036 | 75.922 | 225.656 |
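The comparison among the three isomers can be checked directly from the tabulated values. The short Python sketch below ranks the isomers by ionization potential and by each complexity measure; the dictionary keys and the `rank` helper are illustrative names, and the numbers are transcribed from Table 1.

```python
# Values transcribed from Table 1 (atomic units).
table = {
    "HCNO": {"E": -168.134, "IP": 0.403, "hardness": 0.294,
             "C_LMC": 76.453, "C_FS": 229.303},
    "HNCO": {"E": -168.261, "IP": 0.447, "hardness": 0.308,
             "C_LMC": 74.159, "C_FS": 223.889},
    "HOCN": {"E": -168.223, "IP": 0.453, "hardness": 0.305,
             "C_LMC": 75.922, "C_FS": 225.656},
}

def rank(prop, reverse=False):
    """Isomers sorted by a tabulated property (ascending unless reverse=True)."""
    return sorted(table, key=lambda mol: table[mol][prop], reverse=reverse)

by_ip = rank("IP")                      # lowest ionization potential first
by_lmc = rank("C_LMC", reverse=True)    # highest LMC complexity first
by_fs = rank("C_FS", reverse=True)      # highest FS complexity first
print(by_ip, by_lmc, by_fs)
```

With the Table 1 values, HCNO comes first in each of the three rankings, while HNCO and HOCN exchange places between the ionization-potential ordering and the complexity orderings.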

Our analyses show that molecular complexity is influenced by several chemical factors, including molecular structure, composition, functionality, and reactivity. Molecules with higher complexity values tend to have higher energies, larger numbers of electrons, smaller hardness values (indicating higher reactivity), and smaller ionization potential values (indicating lower stability). However, there is no clear correlation between molecular complexity and chemical reactivity, suggesting that other factors beyond complexity also play a role in determining reactivity. Furthermore, the analysis of information planes reveals that molecular energies are related to the uncertainty of the systems, as measured by L p or J p . Low-energy molecules tend to exhibit more linear behavior and are closer to the lower bounds, whereas high-energy molecules tend to deviate from the isocomplexity lines. This suggests that complexity is influenced by both molecular energy and uncertainty. In summary, molecular complexity is affected by a combination of factors including structure, composition, reactivity, and energy. The analysis of information planes provides further insights into the relationship between complexity and uncertainty in molecular systems.

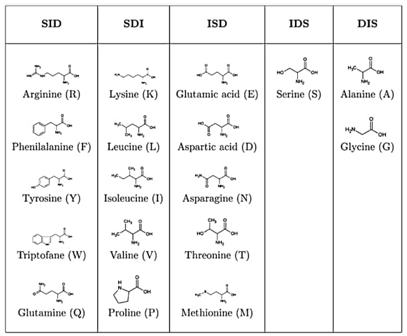

Predominant information-theoretic quality scheme (PIQS) of amino acids

To our knowledge, no prior studies have thoroughly characterized essential amino acids using information-theoretic measures of their actual electronic densities. In this research, we analyzed probability density distributions derived from wave-function calculations at the Hartree-Fock (HF) and Configuration Interaction (CI) levels of theory. We computed several metrics: Shannon entropy, Fisher information, disequilibrium, and the FS and LMC complexities to categorize amino acids based on their physicochemical traits. The objectives of this study are multifaceted: firstly, to describe amino acids through their inherent information content, utilizing measures that reflect delocalization, narrowness, and order; secondly, to develop a comprehensive classification system for amino acids based on their biochemical properties; and thirdly, to explore potential connections between information measures and reactivity parameters. Results have been published in Ref. [95].

Computational details

In this study, we performed electronic structure calculations for a complete set of amino acids using the Gaussian 09 software suite [62] at the Hartree-Fock (HF), DFT-M062X, and CISD(Full) levels of theory with the 6-311+G(d,p) basis set. We initially conducted geometry optimizations on 18 amino acids extracted from the 1C3X protein, considering five distinct conformations for each amino acid (excluding cysteine and histidine, which are absent from this protein). To maintain the integrity of the original biological context, we restricted our optimizations to only the hydrogen atoms while preserving the skeletal structure of each conformation. These optimizations were carried out at the HF level using the specified basis set. Subsequent single-point calculations at the CISD(Full) level were then performed to determine all the information-theoretic properties relevant to our analysis. It should be noted that the selection of only five conformers for each amino acid in this study is arbitrary but serves to evaluate the significance of geometric conformations within the protein. The primary aim of this research is to compute information-theoretic measures in position space, which were determined at the CI level.
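The input files themselves are not reproduced here. As a hedged sketch, the two-step protocol (hydrogen-only optimization at the HF level followed by a CISD(Full) single point) could be set up with a small Python helper that writes Gaussian-style input decks. The helper name, the route lines, and the placeholder coordinates are assumptions made for illustration, and the use of the molecule-specification freeze code (-1 for frozen atoms, 0 for free ones) reflects our reading of the Gaussian input conventions, which should be verified against the Gaussian manual.

```python
def gaussian_input(title, atoms, route, charge=0, multiplicity=1,
                   freeze_heavy=False):
    """Build a Gaussian-style input deck as a string.

    atoms: list of (symbol, x, y, z) tuples in angstrom.
    freeze_heavy: if True, non-hydrogen atoms get freeze code -1 (held
    fixed) and hydrogens get 0 (free to relax) during an optimization.
    """
    lines = [route, "", title, "", f"{charge} {multiplicity}"]
    for sym, x, y, z in atoms:
        if freeze_heavy:
            code = 0 if sym == "H" else -1
            lines.append(f"{sym:<2} {code:>2} {x:12.6f} {y:12.6f} {z:12.6f}")
        else:
            lines.append(f"{sym:<2} {x:12.6f} {y:12.6f} {z:12.6f}")
    return "\n".join(lines) + "\n\n"

# Placeholder fragment with made-up coordinates (not a real conformer).
atoms = [("N", 0.000, 0.000, 0.000),
         ("H", 0.000, 0.940, 0.380),
         ("H", 0.810, -0.470, 0.380)]

# Step 1: relax hydrogens only at the HF level (assumed route line).
opt_deck = gaussian_input("H-only optimization", atoms,
                          "#P HF/6-311+G(d,p) Opt", freeze_heavy=True)

# Step 2: CISD(Full) single point on the relaxed geometry, requesting
# the correlated density for later analysis (assumed route line).
sp_deck = gaussian_input("CISD single point", atoms,
                         "#P CISD(Full)/6-311+G(d,p) Density=Current")
print(opt_deck)
print(sp_deck)
```

Keeping the heavy-atom skeleton frozen in the first deck mirrors the stated aim of preserving the conformation found in the protein, while the second deck leaves the geometry untouched and only changes the level of theory.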