Corresponding author is Jose P. Perez.

1 Introduction

Since Erdős and Rényi proposed the best-known random graph model [1], complex networks have attracted much attention in many fields of research, such as biology, physics, computer networks, and the World Wide Web (WWW) [2]. Network synchronization is of great practical value. Accordingly, Atay et al. [3] studied the synchronization of complex networks with delays among the nodes; Motter et al. [4] studied the influence of coupling strength on the synchronizability of a complex network; Timme et al. [5] studied the synchronization of networks of pulse-coupled dynamical systems; Checco et al. [6] studied the synchronization of random networks; Lü et al. [7] constructed general complex dynamical networks and studied their synchronization; Lu and Chen [8] analyzed the synchronization of linearly coupled networks of discrete-time systems; Han and Lu [9] studied how the synchronization ability of coupled networks changes from ring networks to chain networks; and He and Yang [10] studied adaptive synchronization in nonlinearly coupled dynamical networks.

The analysis and control of complex behavior in complex networks, which consist of dynamical nodes, has become a point of great interest in recent studies [11, 12, 13]. The complexity in networks comes from their structure and dynamics but also from their topology, which often affects their function. Recurrent neural networks have been widely used in optimization, pattern recognition, signal processing, and control systems, among other fields. They have to be designed so that there is a single equilibrium point that is globally asymptotically stable. In biological and artificial neural networks, time delays arise in the processing, storage, and transmission of information, and these delays are known to create oscillatory or even unstable trajectories. Trajectory tracking is an important problem in control systems theory; it enables automatic control tasks such as high-speed target recognition and tracking, real-time visual inspection, and recognition of context-sensitive and moving scenes, among others.

This paper is motivated by the importance of complex network synchronization and chaos control. Network synchronization is one of the most practical and valuable issues. A network is said to be synchronized when, under certain conditions, the outputs of all its nodes are consistent with any given external input signal. We present the design of a control law that guarantees trajectory tracking for general complex dynamical networks, and numerical simulations are used to verify the effectiveness of the proposed technique.

2 Mathematical Models

2.1 General Complex Dynamical Network

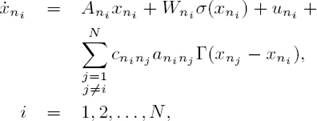

Consider a network consisting of N linearly and diffusively coupled nodes, with each node being an n-dimensional dynamical system, described by

where $x_i = (x_{i1}, x_{i2}, \ldots, x_{in})^T \in \mathbb{R}^n$ is the state vector of node $i$, $f_i : \mathbb{R}^n \to \mathbb{R}^n$ represents the self-dynamics of node $i$, and the constants $c_{ij} > 0$ are the coupling strengths between node $i$ and node $j$, with $i, j = 1, 2, \ldots, N$. $\Gamma = (\tau_{ij}) \in \mathbb{R}^{n \times n}$ is a constant internal matrix that describes how the components in each pair of connected node vectors $(x_j - x_i)$ are linked: for the pairs $(i, j)$ with $1 \le i, j \le n$ and $\tau_{ij} \ne 0$, the two coupled nodes are linked through their $i$th and $j$th sub-state variables, respectively. The coupling matrix $A = (a_{ij}) \in \mathbb{R}^{N \times N}$ denotes the coupling configuration of the entire network: if there is a connection between node $i$ and node $j$ ($i \ne j$), then $a_{ij} = a_{ji} = 1$; otherwise $a_{ij} = a_{ji} = 0$.
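For reference, the definitions above match the standard model of a linearly and diffusively coupled network. A sketch of equation (1) under that assumption follows; the exclusion of the index $j = i$ from the sum and the placement of $\Gamma$ are assumed from the usual form of this model, not taken from the source.

```latex
\dot{x}_i = f_i(x_i) + \sum_{j=1,\, j \neq i}^{N} c_{ij}\, a_{ij}\, \Gamma\, (x_j - x_i),
\qquad i = 1, 2, \ldots, N. \tag{1}
```

In this form the coupling term vanishes whenever all node states coincide, which is what "diffusively coupled" means.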

2.2 Recurrent Neural Network

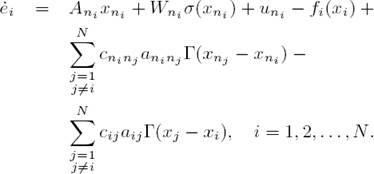

Consider a recurrent neural network in the following form:

where

3 Trajectory Tracking

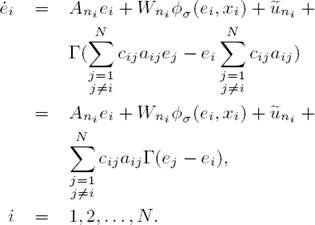

The objective is to develop a control law such that the $i$th neural network (2) tracks the trajectory of the $i$th dynamical system (1). We define the tracking error as $e_i = x_{ni} - x_i$, $i = 1, 2, \ldots, N$, whose time derivative is

$$\dot{e}_i = \dot{x}_{ni} - \dot{x}_i. \tag{3}$$

Substituting (1) and (2) in (3), we obtain

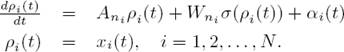

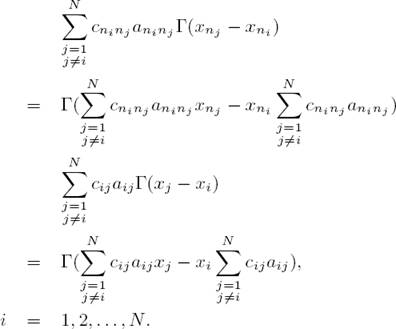

Adding and subtracting

In order to guarantee that the ith neural network (2) tracks the ith reference trajectory (1), the following assumption has to be satisfied:

Assumption 1. There exist functions $\rho_i(t)$ and $\alpha_i(t)$, $i = 1, 2, \ldots, N$, such that

Let us define

Considering (6) and (7), equation (5) reduces to

Rewriting the summations as

also taking into account that

It is clear that $e_i = 0$, $i = 1, 2, \ldots, N$, is an equilibrium point of (10) when […], $i = 1, 2, \ldots, N$. In this way, the tracking problem can be restated as a global asymptotic stabilization problem for system (10).

4 Tracking Error Stabilization and Control Design

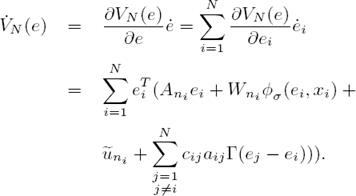

To establish the convergence of (10) to $e_i = 0$, $i = 1, 2, \ldots, N$, which ensures the desired tracking, we first propose the following candidate Lyapunov function

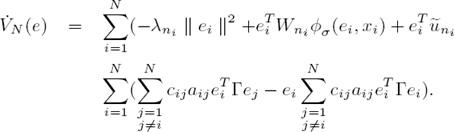

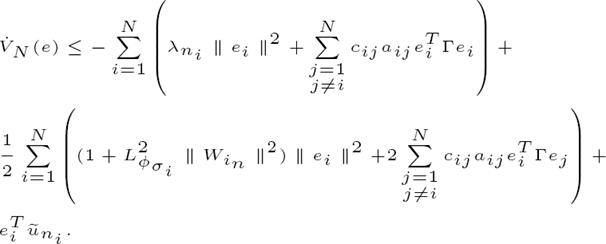

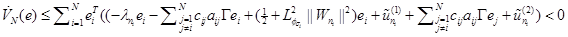

The time derivative of (11), along the trajectories of (10), is

Reformulating (12), we get

Next, let us consider the following inequality, proved in [16, 17]:

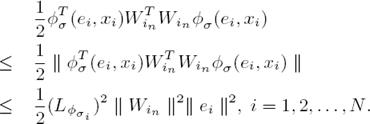

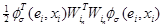

which holds for all matrices $X, Y \in \mathbb{R}^{n \times k}$ and $\Lambda \in \mathbb{R}^{n \times n}$ with $\Lambda = \Lambda^T > 0$. Applying (14) with $\Lambda = I_{n \times n}$ to the term $e_i^T W$ in $\varphi_\sigma(e_i, x_i)$, $i = 1, 2, \ldots, N$, we get

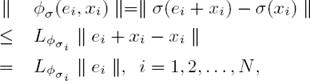
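Inequality (14) is commonly stated as $X^T Y + Y^T X \le X^T \Lambda X + Y^T \Lambda^{-1} Y$. Assuming that form, the following sketch checks it numerically by evaluating the quadratic form of the difference at random vectors $z$. The function names `matvec`, `quadratic_gap`, and `min_gap` are hypothetical, and $\Lambda$ is restricted to a positive diagonal matrix so that its inverse is trivial.

```python
import random

def matvec(M, z):
    """Multiply an n-by-k matrix (list of rows) by a length-k vector."""
    return [sum(m * zj for m, zj in zip(row, z)) for row in M]

def quadratic_gap(X, Y, lam, z):
    """Evaluate z^T (X^T L X + Y^T L^{-1} Y - X^T Y - Y^T X) z.

    L = diag(lam) with lam > 0 (assumed diagonal for simplicity).
    With a = X z and b = Y z this is sum_r (lam_r a_r^2 + b_r^2 / lam_r - 2 a_r b_r).
    """
    a = matvec(X, z)
    b = matvec(Y, z)
    return sum(l * ai * ai + bi * bi / l - 2.0 * ai * bi
               for l, ai, bi in zip(lam, a, b))

def min_gap(n=3, k=2, trials=1000, seed=0):
    """Smallest gap found over random X, Y, Lambda and z."""
    rng = random.Random(seed)
    worst = float("inf")
    for _ in range(trials):
        X = [[rng.uniform(-5, 5) for _ in range(k)] for _ in range(n)]
        Y = [[rng.uniform(-5, 5) for _ in range(k)] for _ in range(n)]
        lam = [rng.uniform(0.1, 10.0) for _ in range(n)]
        z = [rng.uniform(-1, 1) for _ in range(k)]
        worst = min(worst, quadratic_gap(X, Y, lam, z))
    return worst

# The gap should never be negative beyond floating-point rounding.
print(min_gap() >= -1e-9)
```

Each summand equals $(\sqrt{\lambda_r}\, a_r - b_r / \sqrt{\lambda_r})^2$, which is why the gap cannot be negative; with $\Lambda = I$ it reduces to $\|Xz - Yz\|^2$.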

Then, taking into account that $\varphi_\sigma$ is Lipschitz, we have

with Lipschitz constant […]. Applying (16) to […], we obtain

By simplifying (15), we obtain

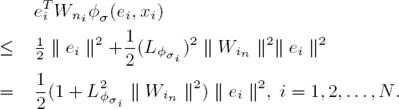

Then we have that:

We define […] e, $i = 1, 2, \ldots, N$; then (19) becomes

Now, we propose to use the following control law:

This means that the proposed control law (21) can globally and asymptotically stabilize the ith error system (10), thereby ensuring the tracking of (1) by (2). Finally, the control action driving the recurrent neural networks is given by

5 Simulations

To illustrate the applicability of the discussed results, we consider a dynamical network with one Lorenz node and three identical Chen nodes. The Lorenz system is described by

and the Chen oscillator is described by Eq. (24):

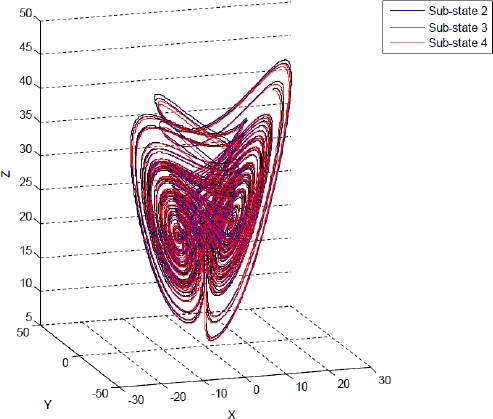

If the system parameters are selected as $p_1 = 35$, $p_2 = 3$, $p_3 = 28$, then the Lorenz and Chen systems behave as shown in Fig. 1 and Fig. 2, respectively. For this set of parameters, one unstable equilibrium point of the oscillator (24) is $x = (7.9373, 7.9373, 21)^T$.
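The stated equilibrium can be checked with a short sketch. It assumes the Chen system in its standard form, $\dot{x} = a(y - x)$, $\dot{y} = (c - a)x - xz + cy$, $\dot{z} = xy - bz$, with the mapping $a = p_1$, $b = p_2$, $c = p_3$. That mapping is an assumption, chosen because it agrees with the equilibrium value given in the text; the function names are hypothetical.

```python
import math

def chen_rhs(state, p1=35.0, p2=3.0, p3=28.0):
    """Right-hand side of the Chen system (standard form, assumed)."""
    x, y, z = state
    dx = p1 * (y - x)
    dy = (p3 - p1) * x - x * z + p3 * y
    dz = x * y - p2 * z
    return (dx, dy, dz)

def chen_equilibrium(p1=35.0, p2=3.0, p3=28.0):
    """Nonzero equilibrium with x = y > 0.

    Setting the right-hand side to zero gives z = 2*p3 - p1 and x^2 = p2 * z.
    """
    z = 2.0 * p3 - p1
    x = math.sqrt(p2 * z)
    return (x, x, z)

eq = chen_equilibrium()
# Largest absolute component of the vector field at the equilibrium.
residual = max(abs(v) for v in chen_rhs(eq))
print(eq, residual)
```

With these parameters $z = 2 \cdot 28 - 35 = 21$ and $x = y = \sqrt{63} \approx 7.9373$, in agreement with the point quoted above.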

Suppose that each pair of connected Lorenz and Chen oscillators is linked through their identical sub-state variables, i.e., $\Gamma = \mathrm{diag}(1, 1, 1)$, and that the coupling strengths are $c_{12} = c_{21} = \pi$, $c_{13} = c_{31} = \pi$, $c_{23} = c_{32} = \pi$, $c_{14} = c_{41} = 2\pi$, $c_{24} = c_{42} = 2\pi$, $c_{34} = c_{43} = 2\pi$. Fig. 3 shows the entire dynamical network.
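The coupling configuration just described can be written down as a small sketch. It assumes that every pair of the four nodes is connected ($a_{ij} = 1$ for $i \ne j$), since a strength is listed for every pair, and that the coupling enters each node as $\sum_{j \ne i} c_{ij} a_{ij} \Gamma (x_j - x_i)$. The helper names `coupling_strengths` and `coupling_term` are hypothetical.

```python
import math

N = 4  # nodes 1..4 of the text are indices 0..3 here

def coupling_strengths():
    """Symmetric matrix of coupling strengths c_ij from the text."""
    c = [[0.0] * N for _ in range(N)]
    for i in range(N):
        for j in range(i + 1, N):
            # Pairs involving node 4 use 2*pi; all other pairs use pi.
            value = 2.0 * math.pi if j == 3 else math.pi
            c[i][j] = c[j][i] = value
    return c

def coupling_term(i, states, c, gamma=(1.0, 1.0, 1.0)):
    """Diffusive coupling acting on node i, with Gamma = diag(gamma).

    Assumes a_ij = 1 for every pair i != j (fully connected network).
    """
    xi = states[i]
    total = [0.0] * len(xi)
    for j, xj in enumerate(states):
        if j == i:
            continue
        for k in range(len(xi)):
            total[k] += c[i][j] * gamma[k] * (xj[k] - xi[k])
    return total

c = coupling_strengths()
states = [(1.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.0, 0.0, 0.0)]
print(coupling_term(0, states, c))
print(coupling_term(3, states, c))
```

In this example only node 1 is displaced, so it is pulled back with total strength $\pi + \pi + 2\pi = 4\pi$, while node 4 is pulled toward it with strength $2\pi$.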

The neural network was selected as

Theorem 1 For the unknown nonlinear system modeled by (1), the on-line learning law $\operatorname{tr}\{W^T W\} = -e^T W \sigma(x)$ and the control law (22) ensure the tracking of the nonlinear reference model (2).

Remark 2

From (20) we have […], $\forall e \neq 0$, $\forall W$, and therefore $V$ is decreasing and bounded from below by $V(0)$. Since […], we conclude that $e, W \in L_1$; this means that the weights remain bounded.

The experiment is performed as follows. Both systems, the recurrent neural network (2) and the dynamical networks (24) and (25), evolve independently at first; the proposed control law (23) is then applied. Simulation results for the sub-states of node 1 are presented in Fig. 4 to Fig. 6. As can be seen, tracking is successfully achieved and the error is asymptotically stable, as shown in Fig. 7 to Fig. 9 for the sub-states of node 4.

6 Conclusions

We have presented a controller design for trajectory tracking of a general complex dynamical network. The framework is based on dynamic recurrent neural networks, and the methodology is based on Lyapunov theory. The proposed control is applied to a dynamical network whose nodes are Lorenz and Chen dynamical systems, and it also asymptotically stabilizes the tracking error between the two systems. The simulation results clearly show the desired tracking. In future work, we will consider the stochastic case for the complex dynamical network.
